Welcome to the greatest guide on the internet on how to do an SEO audit.

Yes… that’s a huge statement but I fully stand by it. By the end of this guide, you’ll be able to analyse a website VERY deeply and extract the most important issues.

BE WARNED: This guide gets deep and dirty so be prepared to roll up your sleeves and be ready to put some elbow grease in.

I’ve created this to be a checklist you can easily go over. This is a guide you can hand over to an SEO junior and they’ll have a much better grasp on how to perform an audit.

By the end of this guide, you, your junior SEOs and your senior SEOs will have a better grasp of how to do a technical, content and off-page audit.

Whenever you’re stuck, refer back to the relevant section of this guide and you’ll know what to do next, both for your audit and for building an SEO strategy.

I’ve been doing SEO for 10 years, worked on many different websites and I’m currently an SEO manager. What you learn here is what I do when I’m helping paying clients…

…and I’m handing this to you on Thursday, March 12th 2026 for free, no strings attached. SEO courses that sell for thousands don’t scratch the surface of what I go over in this guide.

So get your hot chocolate out, turn off any distraction and get locked in.

HERE WE GO!


How to set up Screaming Frog

Screaming Frog is the most useful technical SEO tool I’ve ever used in my entire life and many SEOs say the same thing.

Once you’ve set up Screaming Frog, you’ll be able to do a deep crawl into your website and bring important issues to the forefront.

First of all, know that there’s a free version of Screaming Frog and a paid version. Do yourself a favour and get the paid version otherwise you can’t follow along. It’s well worth it.

1. Open up Screaming Frog.

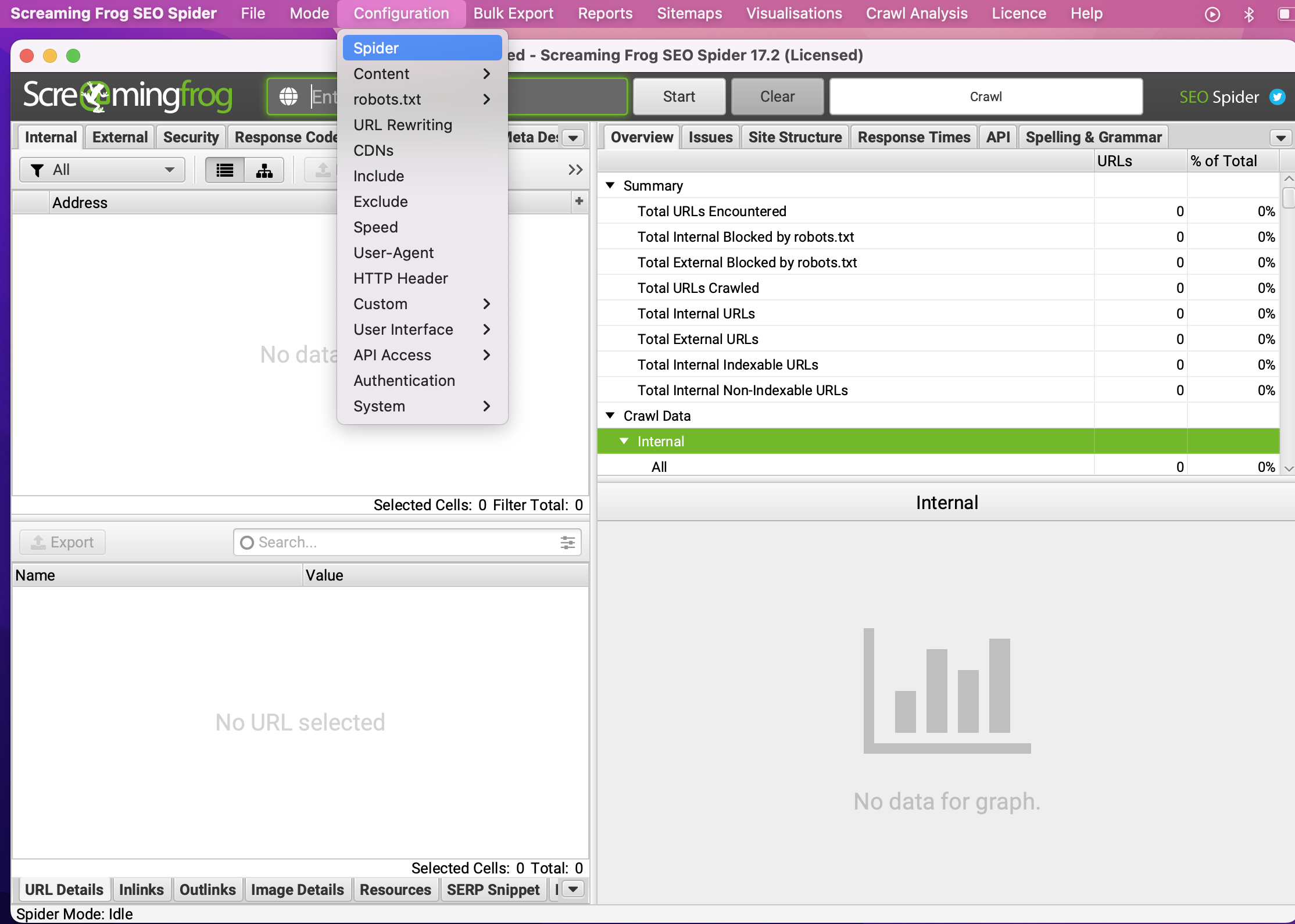

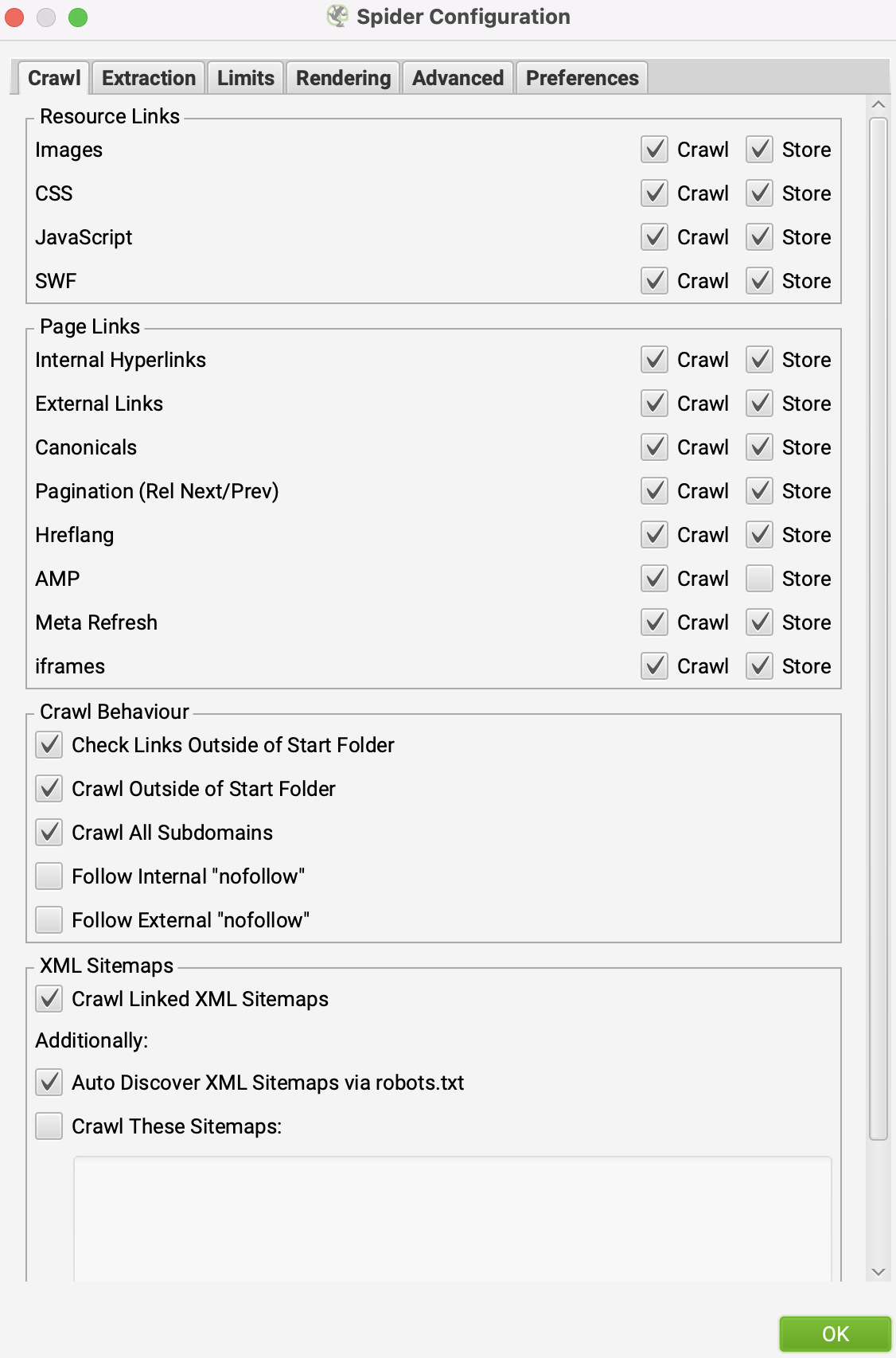

2. Click on ‘Configuration’ > ‘Spider’ and copy the configuration below and click ‘OK’ (if you know what your sitemap link(s) is, you can also include that.)

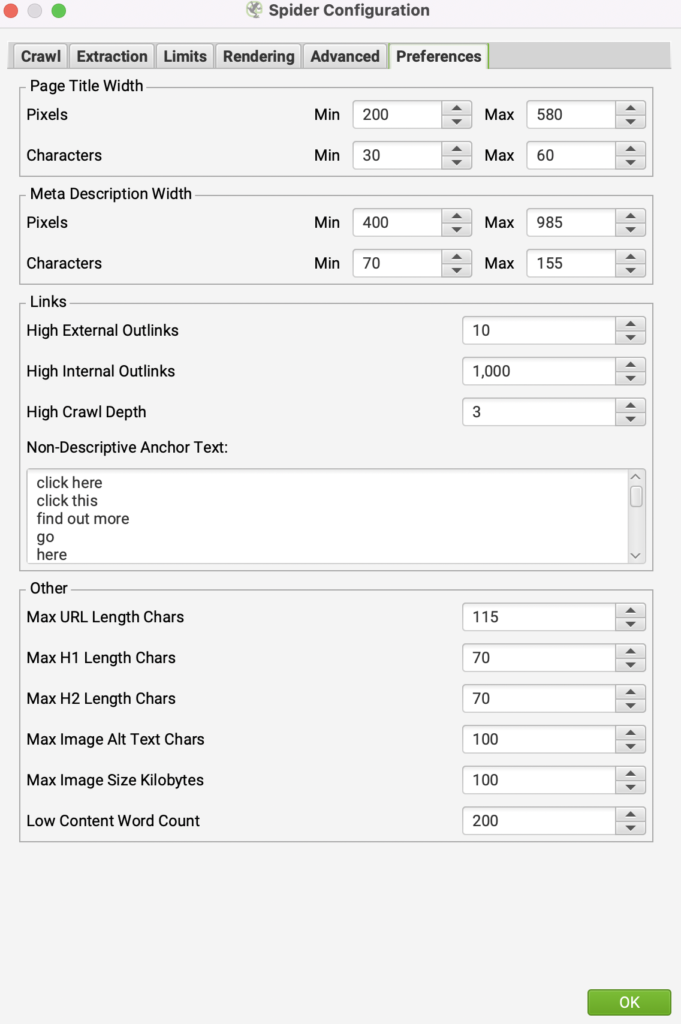

3. Click on ‘Configuration’ > ‘Spider’ > ‘Preferences’ tab and copy the below and click ‘OK’

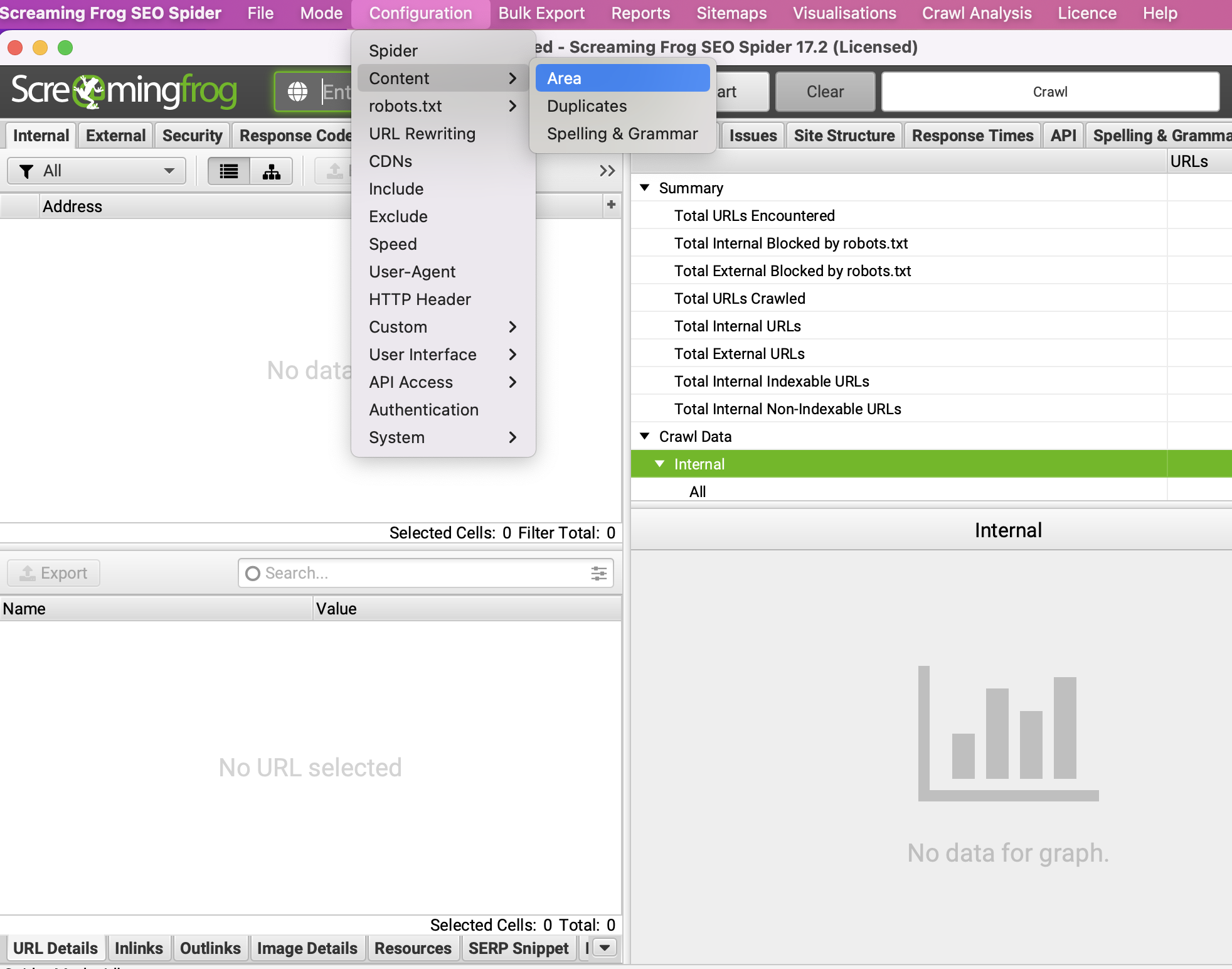

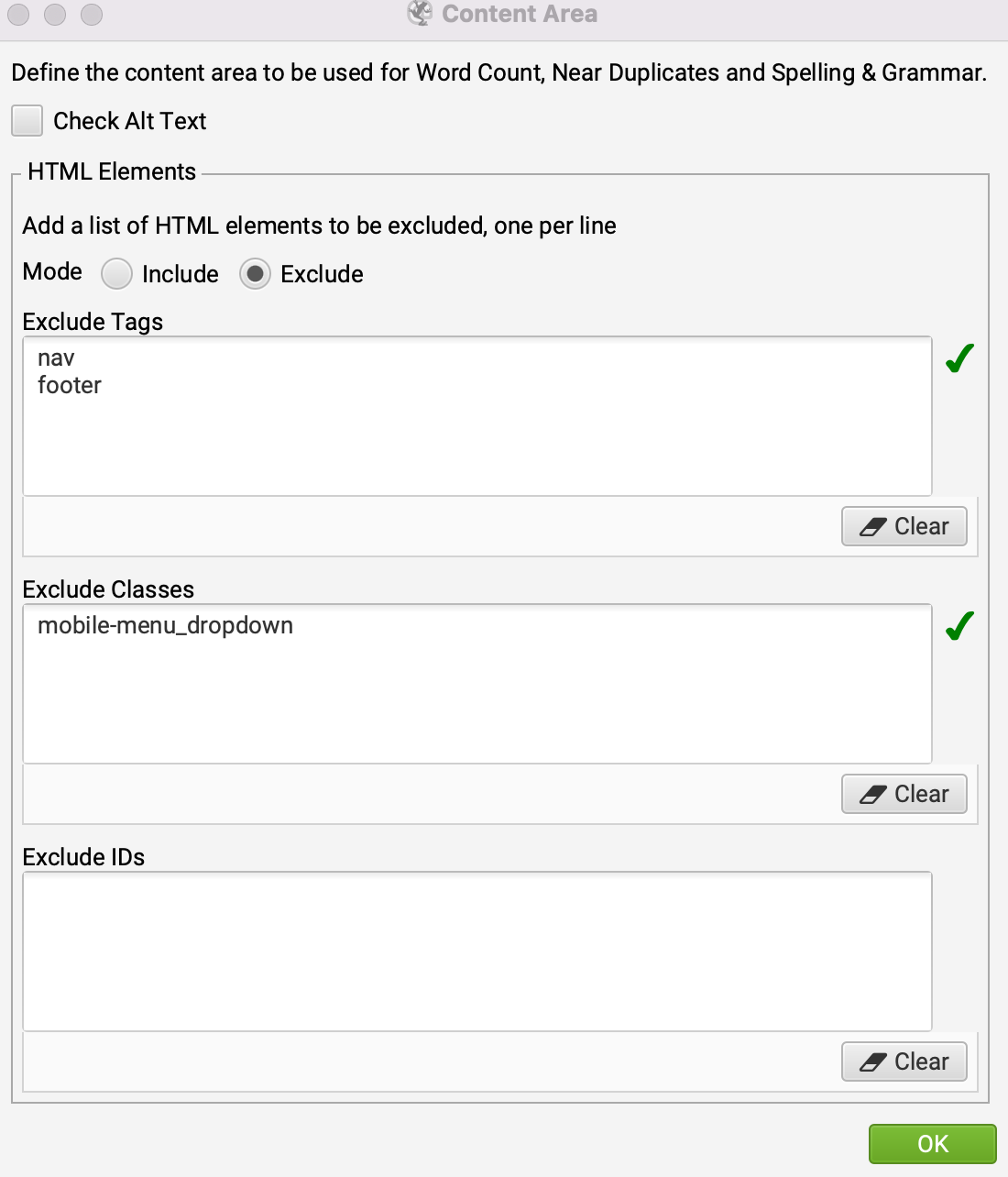

4. Click on ‘Configuration’ > ‘Content’ > ‘Area’ and copy the below and click ‘OK’

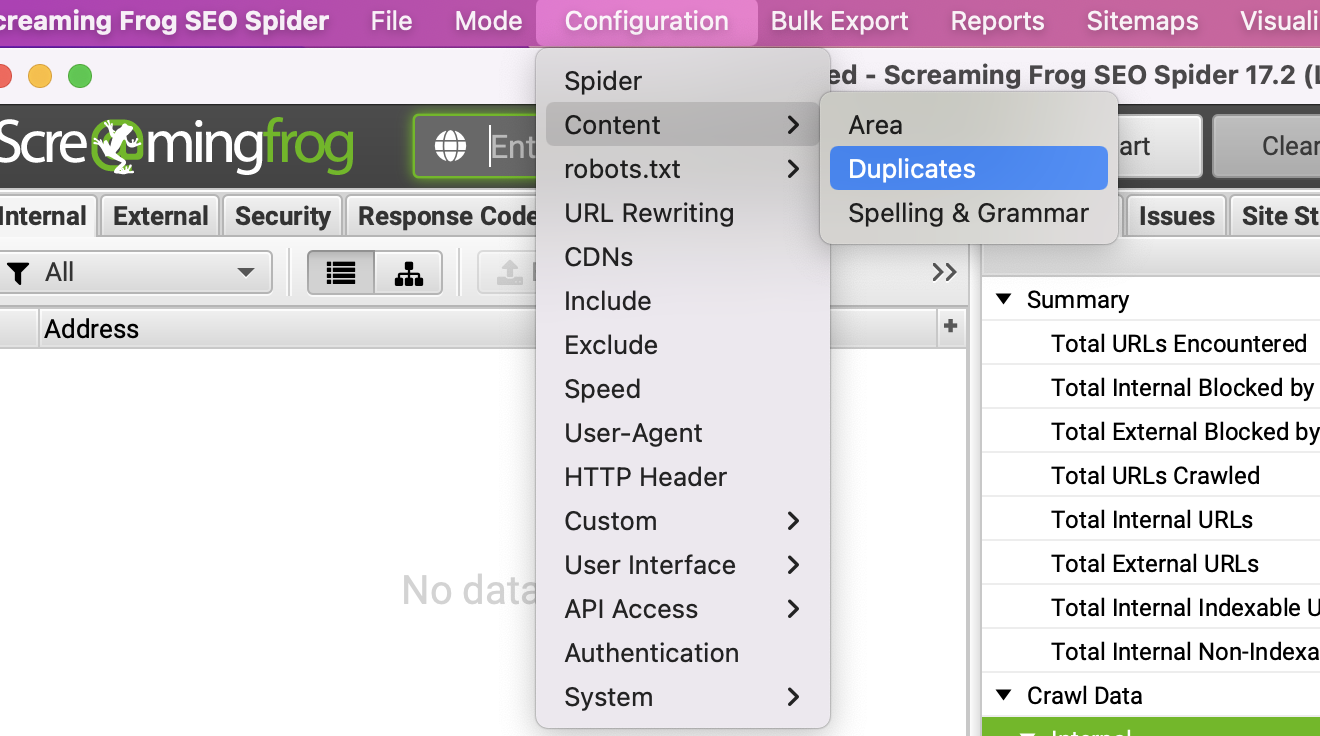

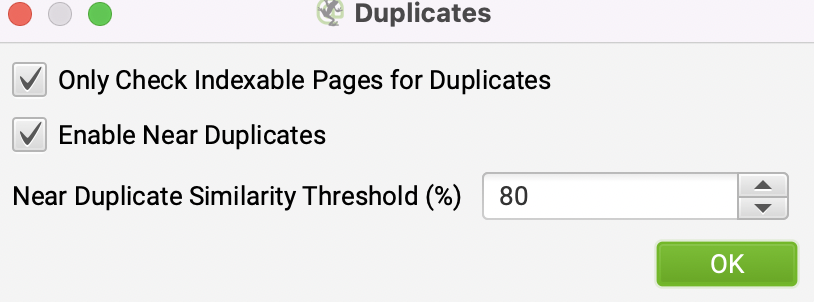

5. Click on ‘Configuration’ > ‘Content’ > ‘Duplicates’ and copy the below.

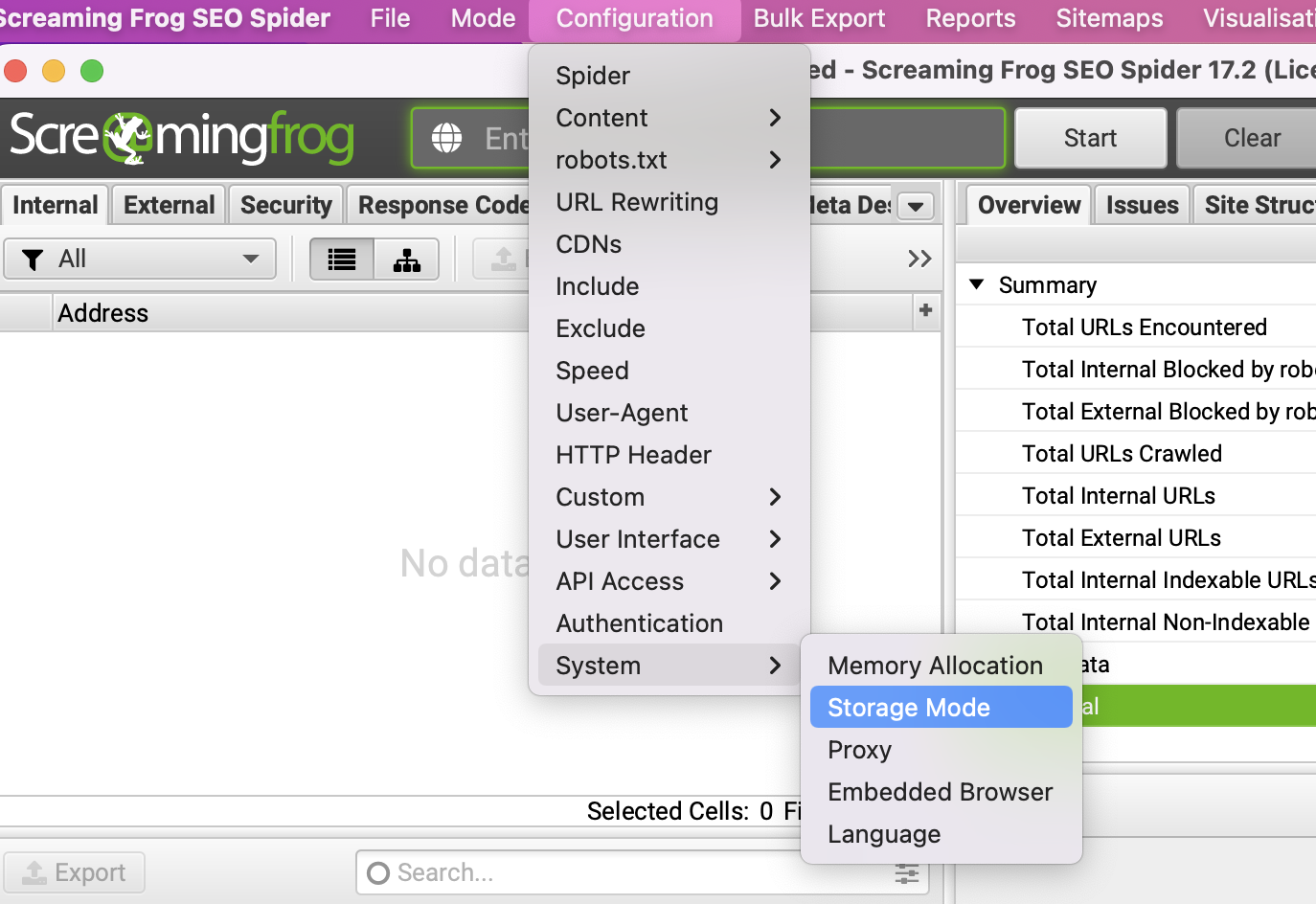

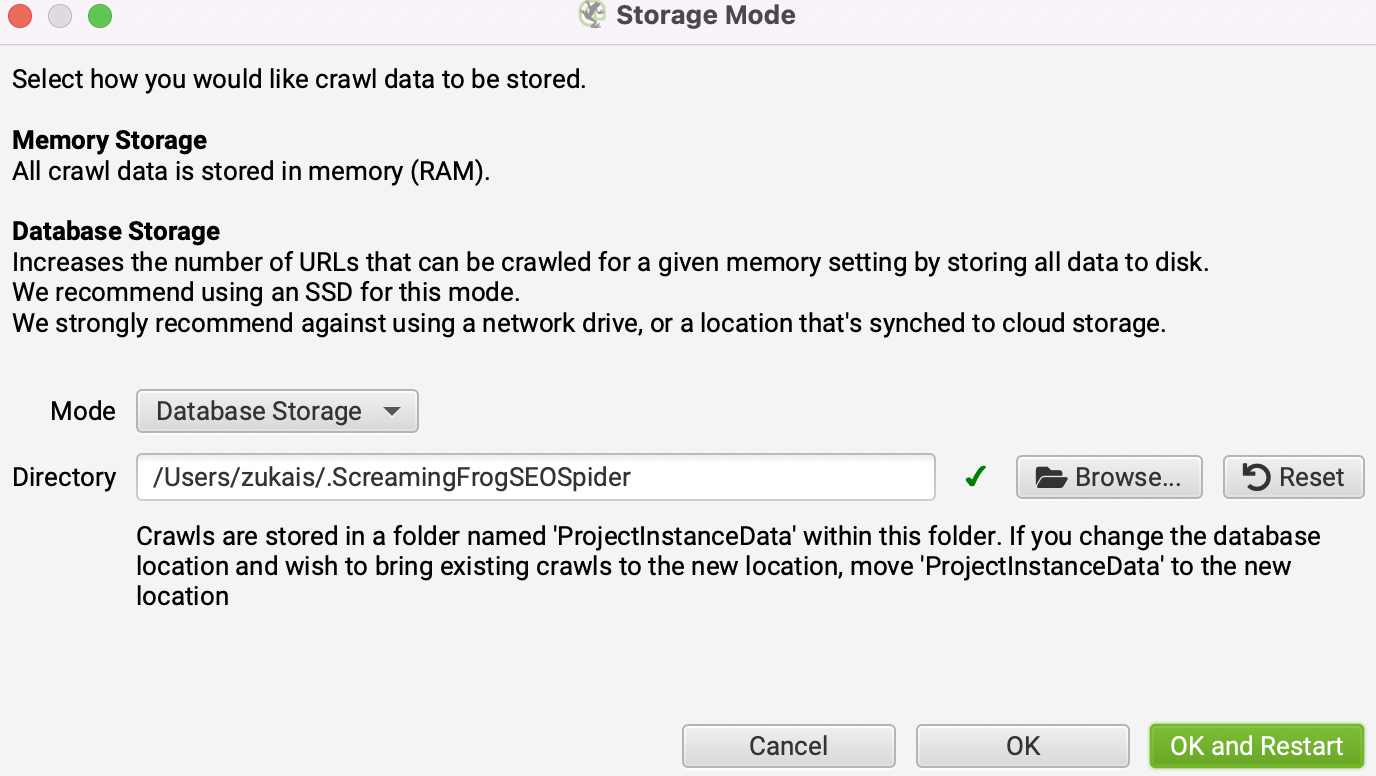

6. Click on ‘Configuration’ > ‘System’ > ‘Storage Mode’, change it to ‘Database Storage’ and click ‘OK’.

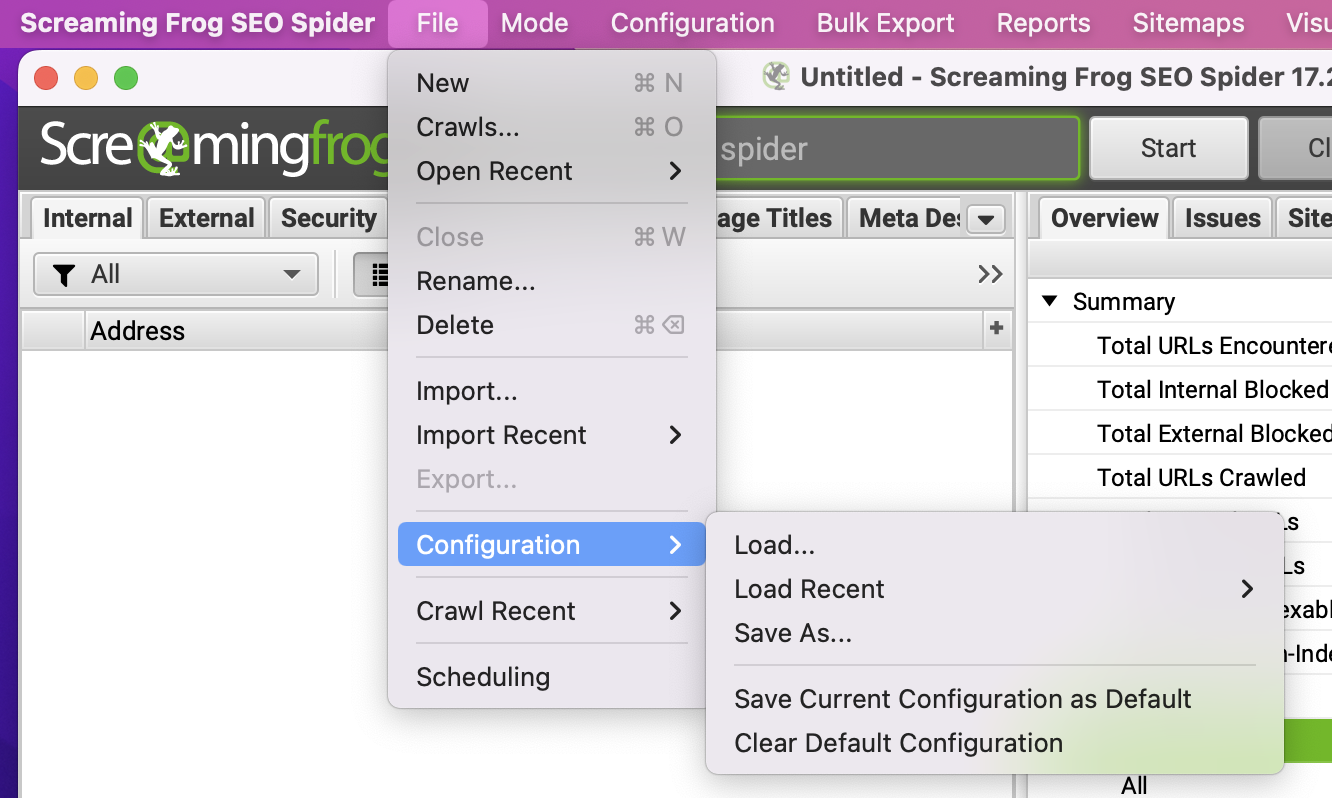

7. Click on ‘File’ > ‘Configuration’ > ‘Save Current Configuration as Default’

8. Exit Screaming Frog and open it up again and you’re done!

Now these settings have been saved, you don’t need to configure them again unless you update the tool.

How to run a Screaming Frog Crawl

- Put your root URL into Screaming Frog e.g. yourwebsite.com instead of https://yourwebsite.com and click start.

- After the crawl has finished, click ‘Crawl Analysis’ > ‘Start’

- After the crawl analysis has finished, click on ‘File’ > ‘Configuration’ > ‘Save as…’ and save the file where you want. And you’re all done!

Every time you run Screaming Frog, go through the steps above.

NOW! Let’s get into the meaty stuff…

This is where the actual auditing begins.

Navigation Checks

What is Navigation and why does it matter?

The navigation part of the website is all about how users and search engines navigate through your website. Are the users and search engines able to get to pages they need in an efficient manner?

Does the search engine have to dive deep into your website, clicking link after link to get to a certain page?

Is the user able to get to the page they want within 3 to 4 clicks?

This is a critical part of your website. You must make sure it’s very easy to navigate, otherwise both users and search engines will leave your site and it will be stuck in limbo.

Here, we’ll take a look at each part of the navigation journey that users and search engines take.

1. Valid XML sitemap

What is an XML sitemap and why does it matter?

An XML sitemap is a way to inform search engines that certain URLs should be crawled and indexed. It’s a file which lists the pages you want indexed.

An XML sitemap is a map for the search engine, it helps the search engine understand your website better.

Without it, the search engine will have no instructions from you on where everything is located. A sitemap makes it easy for the search engines to find their way around.

You may be able to view your sitemap if you put /sitemap.xml at the end of your website’s address. For example, yourwebsite.com/sitemap.xml.

Recommended tool

Screaming Frog.

How to

In this section, I’ll go over the dos and don’ts of the XML sitemap. What it should look like, what should be included and what should be excluded.

Break up the sitemap

The first thing is organisation. Make sure things are categorised; this will make it a lot easier when you decide to dive into your sitemap and do an audit.

There should be a sitemap for:

- Posts

- Pages

- Images

- Products

- And you can add more in terms of categorisation.

Format

When you look at your sitemap, it should be in this format:

<?xml version="1.0" encoding="UTF-8"?>

<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">

<url>

<loc>http://www.example.com/foo.html</loc>

<lastmod>2018-06-04</lastmod>

</url>

</urlset>

You don’t need to have the <lastmod> tag if you don’t want to.

It looks pretty complicated and if you’re unsure, speak to a developer on what this means.
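If you want to check the format without reading the XML by eye, here is a minimal Python sketch that parses a sitemap and lists each URL with its optional last-modified date. The function name `parse_sitemap` and the `SAMPLE` data are my own illustration (the sample is the example above), so treat this as a starting point rather than a finished tool.

```python
import xml.etree.ElementTree as ET

# Namespace declared on <urlset> in the sitemap format shown above.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def parse_sitemap(xml_bytes):
    """Return a list of (loc, lastmod) tuples; lastmod is None when the tag is absent."""
    root = ET.fromstring(xml_bytes)
    entries = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default=None, namespaces=SITEMAP_NS)
        entries.append((loc, lastmod))
    return entries


# Hypothetical sample data: the example sitemap from this section.
SAMPLE = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>http://www.example.com/foo.html</loc>
    <lastmod>2018-06-04</lastmod>
  </url>
</urlset>"""

for loc, lastmod in parse_sitemap(SAMPLE):
    print(loc, lastmod)
```

If the file is not well-formed XML, `ET.fromstring` raises a `ParseError`, which is itself a useful audit signal.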

Pages to be excluded in the sitemap

Non-canonical pages: Each page you want to be discovered and indexed should have a canonical tag (self-referencing canonical tag). We’ll talk about canonicals later.

What is a self-referencing canonical?

A self-referencing canonical is a canonical link on a page that points to that same page.

Duplicate pages: Let’s say you’ve got a product page, ‘5 inch chainsaw’ and another product page which is, ‘6 inch chainsaw’. All of the content is the same except one page mentions 5 inch variation and the other mentions a 6 inch variation.

That one detail isn’t enough for the pages to be considered unique.

Those pages should be consolidated into a single page or unique content should be written for each page.

In your Screaming Frog crawl, go to the ‘Content’ tab, click on the ‘Filter’ bar to see Exact and Near Duplicates.

Check and see if these pages appear in the sitemap.

Paginated pages: Paginated pages should not appear in the sitemap.

Paginated pages are pages such as yourwebsite.com/blog/page2. There’s no need to include these types of pages.

Parameter or session ID based URLs: Parameter URLs are URLs that have a question mark in them (?) with an equals sign (=) inside the URL also.

They’re used to modify content. For example, if you’re viewing a white shirt and click on the black shirt variation, this can change the URL’s parameter. Also, tracking which source traffic came from can also be part of a parameter e.g. https://www.examplewebsite.co.uk/?utm_source=newsletter&utm_medium=email

Site search result pages: When you use the search function on a website, those results should not be in the sitemap.

For example, if you searched ‘bob’ in your website’s search box, it would display a URL such as this, examplewebsite.com/?s=bob.

Reply to comment URLs: Sometimes, comments can be their own URL. Make sure none of these appear in the sitemap. You can find the URL of the comment by clicking on the date or author.

Email URLs: There’s no need for email links to be in the sitemap. For example, if you clicked on an email link such as ‘bob@bobsblog.biz’ the page URL would look like this, mailto:bob@bobsblog.biz.

URLs created by filtering that are unnecessary for SEO: URLs created by filtering may not need to be in the sitemap unless you want it indexed and ranked.

For example, let’s say on your product page there is a small executive desk. On that page there is an option for a large executive desk. You may or may not want that page to rank. If you do, include it in the sitemap, if not, exclude it.

Certain Archives: Archives are a way to order types of pages on your website. Archives can be things like categories, authors, location, tags etc.

Certain archives you may want in your sitemap and certain archives you won’t. For example, it’s good practice to have your categories in the sitemap but not tags. Tags can easily lead to keyword cannibalization.

Any redirections (3xx), missing pages (4xx) or server error pages (5xx): Redirects, errors such as 404 (broken pages) and server errors should never be in the sitemap. Google won’t index these pages, they provide no value, and including them in the sitemap can confuse search engines.

Pages blocked by robots.txt: By blocking pages in the robots.txt, you’re telling Google you don’t want those pages crawled, so there’s no reason to list them in the sitemap.

You may be able to access your robots file by putting /robots.txt at the end of your website. For example, yourwebsite.com/robots.txt.

We will talk about the robots.txt file later in this guide. And trust me, it’s not as complicated as it sounds.

Pages with noindex: A noindex tag tells Google you don’t want that page to be indexed in the search results.

In Screaming Frog, click on the ‘Sitemaps’ tab and click on ‘non-indexable URLs in the sitemap.’

Exclude these URLs from the sitemap.

Resource pages accessible by a lead gen form (e.g., white paper PDFs): You may not want certain PDFs to show in the search results and some you might want to show.

Certain utility pages: Certain utility pages you may not want to appear in the search results such as the privacy policy.
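Several of the exclusions above can be spotted from the URL alone. Here is a rough Python sketch that flags them. The function name `sitemap_exclusion_reason` is hypothetical, the `s` search parameter is assumed from the ?s=bob example above, and the pagination pattern only covers URLs shaped like /page2 or /page/2/, so adjust both to match your own site.

```python
import re
from urllib.parse import urlparse, parse_qs


def sitemap_exclusion_reason(url):
    """Return a short reason why a URL looks unsuitable for the sitemap, or None."""
    parsed = urlparse(url)
    if parsed.scheme == "mailto":
        return "email link"
    params = parse_qs(parsed.query)
    # Assumption: the site search uses an "s" parameter, as in ?s=bob.
    if "s" in params:
        return "site search result"
    if params:
        return "parameter URL"
    # Assumption: pagination looks like /page2 or /page/2/.
    if re.search(r"/page/?\d+/?$", parsed.path):
        return "paginated page"
    return None


for candidate in [
    "mailto:bob@bobsblog.biz",
    "https://examplewebsite.com/?s=bob",
    "https://www.examplewebsite.co.uk/?utm_source=newsletter&utm_medium=email",
    "https://yourwebsite.com/blog/page2",
    "https://yourwebsite.com/about-us",
]:
    print(candidate, "->", sitemap_exclusion_reason(candidate))
```

Checks such as noindex, status codes and duplicates need the page itself, so they still come from your Screaming Frog crawl.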

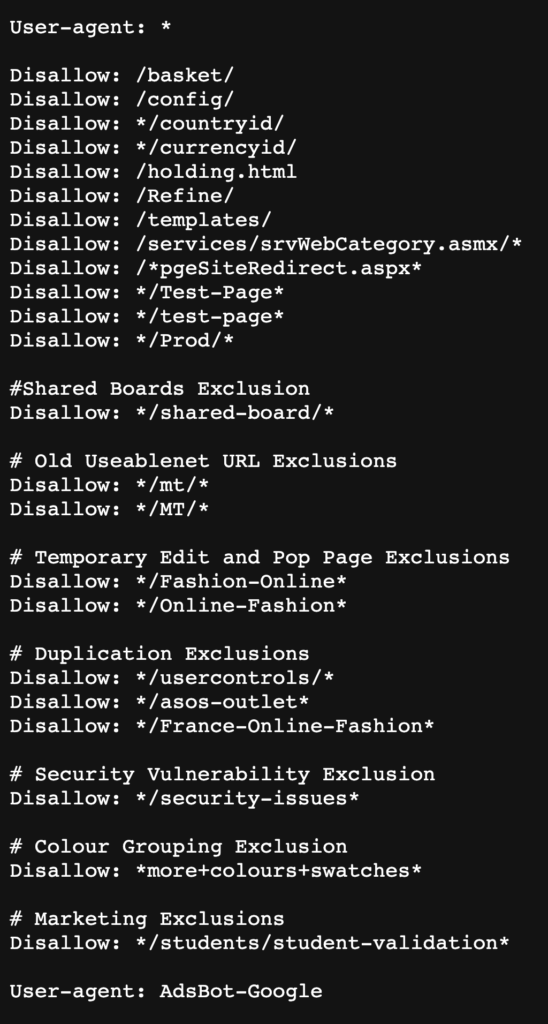

2. Valid robots.txt file

The robots.txt file communicates with different entities such as search engines and tells them how the website should be accessed: which pages can be crawled and which cannot.

For example, if you decide to block the login page in the robots.txt file, search engines won’t crawl it, which takes load off your server. It usually won’t appear in the search results either, although robots.txt alone doesn’t guarantee that.

The primary purpose of the robots file is to ensure your website isn’t overloaded with requests. But SEOs also use it to prevent pages from appearing in the Google index.

It was made to stop crawlers slowing and crashing your website. For example, some people have reported that the Screaming Frog tool has slowed and even crashed websites. This is because the Screaming Frog crawler was making too many requests for the website’s server to handle.

If you intend to prevent a page from being indexed using the robots file, know that the page can still appear in the search engines if it’s linked to. As mentioned, the robots file was made to stop your website from being overwhelmed, not to hide pages.

To prevent pages from being indexed, use other methods such as applying the noindex tag.

You can access your robots file by putting /robots.txt at the end of your website. For example, yourwebsite.com/robots.txt.

A deeper understanding of the robots file

The robots.txt might not be obeyed by all crawlers. Your robots file gives instructions but that doesn’t mean all of the crawlers will follow those instructions.

The Google bot and other responsible crawlers will obey you, others won’t.

Think about it, if there are pages you want restricted, the robots file can be an easy target for people to see which pages you don’t want seen. That’s why it’s best to use a password to protect these types of pages.

For example, if you have a paid course, you don’t want everyone getting access to it by seeing it in the search results. But the robots file might give away the location. That’s why it’s better to have a password on that type of page.

Different crawlers can interpret the instructions/syntax differently

To learn the syntax, check out what Google says about it. There are 3 main directives you will use:

- User-agent:

- Allow:

- Disallow:

User-agent: – The user agent is the entity crawling your website. For example, a user agent could be Google’s crawler, Googlebot.

User-agent: Googlebot

If you want to allow all entities to access your website, you would put:

User-agent: *

And from here you can choose what you want them to access and not have access to.

Allow: – This is a URL path that crawlers are allowed to crawl. It is used to counteract the disallow directive.

Allow: /about-us

All paths/URLs/pages on your website will be treated as an allow directive unless you have specifically set a path/URL/page in the disallow directive.

For example:

User-agent: *

Allow: /action-figures/dragon-ball

Disallow: /action-figures

In this example, nothing under /action-figures can be crawled except the /action-figures/dragon-ball path.
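You can sanity-check rules like these with Python’s built-in `urllib.robotparser`. This is only a sketch: the domain and the `is_allowed` helper are placeholders, and Python’s parser applies rules in the order they are written, which is not necessarily how every crawler resolves them. It gives the expected answer here because the Allow line comes first.

```python
from urllib.robotparser import RobotFileParser

# The example rules from above.
ROBOTS_TXT = """\
User-agent: *
Allow: /action-figures/dragon-ball
Disallow: /action-figures
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(path, agent="*"):
    """Hypothetical helper: can this agent fetch the path on a placeholder domain?"""
    return parser.can_fetch(agent, "https://yourwebsite.com" + path)


print(is_allowed("/action-figures/dragon-ball"))  # allowed by the Allow line
print(is_allowed("/action-figures/spiderman"))    # blocked by the Disallow line
print(is_allowed("/about-us"))                    # no rule matches, so allowed
```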

NOTE: When using the disallow and allow together, do not use wildcards as this can lead to clashing directives.

For example:

User-agent: *

Allow: /directory/

Disallow: *.html

Disallow: – These are paths/pages/URLs you don’t want any bot to crawl. You can give different user-agents different instructions.

Note that your robots.txt file is publicly available. Disallowing website sections in there can be used as an attack vector by people with malicious intent.

Robots.txt best practices

Location and file name

The robots.txt file should be in the very root of the website. It should be in the top directory of the host with the filename robots.txt.

Order priority

Remember, crawlers can decide to ignore or obey the robots file if they want. With Google, you have to be very precise with what you want the Google bot to do.

For example:

User-agent: *

Allow: /services/SEO/

Disallow: /services/

In this example, some bots will stay out of everything under /services/, which includes /services/SEO/.

However, Google will crawl the /services/SEO/ directory but nothing else under /services/. This is because you have specifically allowed it, and Google follows the most specific (longest) matching rule.
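To make Google’s behaviour concrete, here is a simplified Python sketch of the "most specific rule wins" idea: the longest matching path decides, and a tie goes to allow. The function `google_style_allowed` is my own illustration, it handles plain path prefixes only (no * or $ wildcards), and it is not Google’s actual code.

```python
def google_style_allowed(path, rules):
    """Decide whether a path may be crawled.

    rules is a list of ("allow" | "disallow", path_prefix) pairs for one user-agent.
    Simplified sketch: prefix matching only, no wildcard support.
    """
    best_kind, best_len = "allow", -1  # no matching rule means the path is allowed
    for kind, prefix in rules:
        if not path.startswith(prefix):
            continue
        longer = len(prefix) > best_len
        tie_prefers_allow = len(prefix) == best_len and kind == "allow"
        if longer or tie_prefers_allow:
            best_kind, best_len = kind, len(prefix)
    return best_kind == "allow"


rules = [("allow", "/services/SEO/"), ("disallow", "/services/")]
print(google_style_allowed("/services/SEO/audit", rules))   # longest match is the allow rule
print(google_style_allowed("/services/web-design", rules))  # only the disallow rule matches
```

Because the decision is based on rule length, the order in which the two lines appear in the file does not change the result in this sketch.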

Only one set of directives per user-agent

Having multiple groups of directives for the same user-agent can confuse crawlers. Keep it as simple and precise as possible.

For example:

User-agent: *

Disallow: /product/

This example won’t allow any search engine access to your products which of course, is not a good thing. So be careful as a misuse can destroy your search rankings.

Directives for all user agents and including directives for an exact bot at the same time

Let’s say you’ve got directives for

User-agent: *

And

User-agent: Googlebot

What will happen?

If you specify directives for a specific bot, that bot will follow its own directives instead of the general ones.
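Here is a small Python sketch of that behaviour using the built-in `urllib.robotparser`. The /private/ and /drafts/ paths, the domain and the bot name OtherBot are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots file with a general group and a Googlebot-specific group.
GROUPED_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /drafts/
"""

group_parser = RobotFileParser()
group_parser.parse(GROUPED_ROBOTS_TXT.splitlines())

BASE = "https://yourwebsite.com"  # placeholder domain

# Googlebot follows only its own group, so the general /private/ rule is ignored.
print(group_parser.can_fetch("Googlebot", BASE + "/private/page"))
print(group_parser.can_fetch("Googlebot", BASE + "/drafts/page"))

# Any other bot falls back to the general group.
print(group_parser.can_fetch("OtherBot", BASE + "/private/page"))
print(group_parser.can_fetch("OtherBot", BASE + "/drafts/page"))
```

The takeaway for your audit: if a rule must apply to Googlebot, repeat it inside the Googlebot group rather than relying on the general one.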

Check if Google can crawl a folder or page with their robots tester. You can also use this as a testing ground for building a new robots file you wish to publish.

Use Google’s robots tester

As mentioned above, Google has their own robots testing tool. Use it to see if the robots file is doing what you want.

Robots file audit checklist

- Take a look and see if anything looks wrong after you’ve read the above.

- Make sure to include the ‘User-agent:’ before the disallow.

- Make sure the sitemap(s) is listed in the robots file. Insert it at the very bottom.

- Separate the user agent and directives with a line break.

- Make sure the robots file redirects to the correct version (http versions should redirect to https).

- Leave a comment to help make things easier to understand (use the hash symbol #)
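A couple of these checks can be automated. The sketch below is a hypothetical `audit_robots` helper that covers only two items from the checklist: a User-agent line must come before any allow or disallow line, and a sitemap must be listed. Everything else still needs your eyes.

```python
def audit_robots(text):
    """Return a list of problems found in a robots.txt string (empty list = pass)."""
    problems = []
    seen_user_agent = False
    has_sitemap = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line or ":" not in line:
            continue
        field = line.split(":", 1)[0].strip().lower()
        if field == "user-agent":
            seen_user_agent = True
        elif field in ("allow", "disallow") and not seen_user_agent:
            problems.append(f"line {number}: {field} appears before any User-agent")
        elif field == "sitemap":
            has_sitemap = True
    if not has_sitemap:
        problems.append("no Sitemap: line found")
    return problems


# Hypothetical example files.
GOOD = """\
# Block the admin area
User-agent: *
Disallow: /wp-admin/

Sitemap: https://yourwebsite.com/sitemap.xml
"""
BAD = "Disallow: /product/\n"

print(audit_robots(GOOD))
print(audit_robots(BAD))
```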

3. Do all the protocol versions redirect to the correct version?

What is this and why does it matter?

Protocol versions of a website are:

- http://www.

- https://www.

- http://

- https://

If the protocols are not redirecting to the correct version, this will lead to duplicate content, potential crawl issues and keyword cannibalization.

Check different page types for these protocols and don’t forget to check if the robots.txt protocol redirects to the correct version.

Recommended tool

Serworx

How to

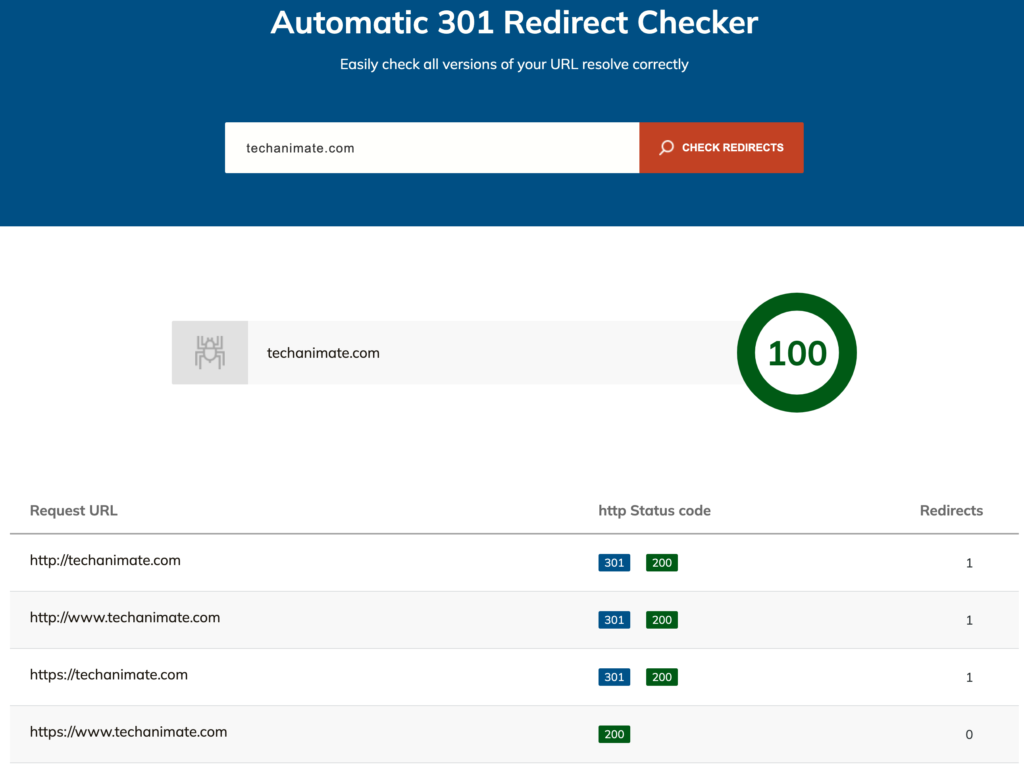

First, type each of the 4 protocol versions of your site into the address bar. Do http://www. and http:// redirect to one of the https versions? If so, that’s a pass.

Now, out of https:// and https://www., does one redirect to the other? If not, there’s a problem and you need one of these to redirect to the other.

It doesn’t matter if you pick https:// or https://www. as the primary version of your website. Just pick one.

On to the next part…

You want to make sure there isn’t a chain redirect.

For example, in order to get to https://www.yourwebsite.com, you don’t want to have to go through:

yourwebsite.com then

http://www.yourwebsite.com, then

https://www.yourwebsite.com

This is called a chain redirect. It should be clean and simple.

Use the Serworx tool to see if pages are redirecting correctly. You want there to be a 301 redirect to the correct protocol and not any other status such as a 302.

A 301 is a permanent redirect and a 302 is a temporary redirect.
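The two checks (no chain, and 301 rather than 302) can be expressed as a short Python sketch. To keep it runnable without touching a live site, it works on a hand-written map of redirects; the function names and the sample maps are assumptions for illustration, and in practice you would fill the map from whatever your redirect checker reports.

```python
def trace_redirects(start_url, redirects, max_hops=10):
    """Follow redirects from start_url.

    redirects maps a URL to (status_code, target_url). A URL that is not in the
    map is treated as the final page. Returns (hops, final_url), where hops is a
    list of (url, status_code) pairs.
    """
    hops = []
    url = start_url
    while url in redirects and len(hops) < max_hops:
        status, target = redirects[url]
        hops.append((url, status))
        url = target
    return hops, url


def audit_redirect(start_url, redirects):
    """Return (final_url, issues) for one starting URL."""
    hops, final_url = trace_redirects(start_url, redirects)
    issues = []
    if len(hops) > 1:
        issues.append("chain redirect")
    if any(status != 301 for _, status in hops):
        issues.append("non-301 redirect")
    return final_url, issues


# Hypothetical redirect maps.
CHAINED = {
    "http://yourwebsite.com": (301, "http://www.yourwebsite.com"),
    "http://www.yourwebsite.com": (301, "https://www.yourwebsite.com"),
}
TEMPORARY = {"http://yourwebsite.com": (302, "https://www.yourwebsite.com")}

print(audit_redirect("http://yourwebsite.com", CHAINED))
print(audit_redirect("http://yourwebsite.com", TEMPORARY))
```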

Put different types of pages into Serworx, such as the home, category, product and service pages, and see if there’s a chain redirect.

When you put your site into the tool, don’t include any protocol version, just put yourwebsite.com.

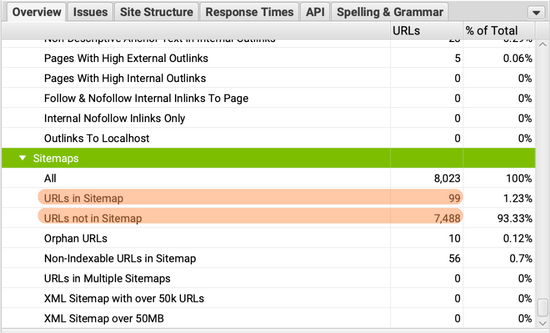

4. URL in sitemap VS indexed in Google

What is this and why does it matter?

The URLs in your sitemap must be the URLs you want indexed by Google. Search engines view your sitemap as exactly that, a map. It’s telling them exactly where to go and what you want indexed.

When the number of URLs in your sitemap is very different from the number of pages Google has indexed (in either direction), that’s odd.

For example, you have 2,000 URLs in your sitemap but Google has 1,800 pages indexed. Or you’ve got 2,000 URLs in the sitemap but Google has indexed 3,000 URLs. This must be looked into.

It’s OK to have some disparity such as 10,000 pages indexed and 9,800 in the sitemap. That’s normal.

However, a large disparity, such as 10,000 pages indexed but 5,000 in the sitemap, is odd.

Recommended tool

Screaming Frog

How to

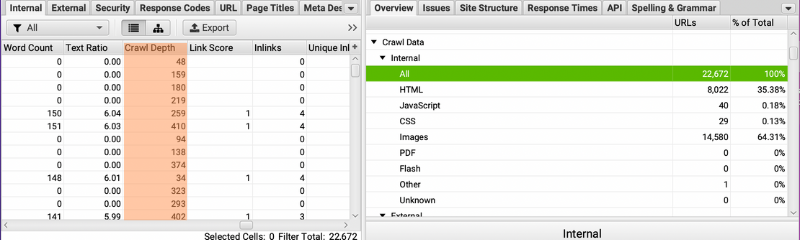

- Run Screaming Frog as mentioned above.

- On the right, click on the ‘Overview’ tab, go to ‘URLs in Sitemap’. Here you can see the number of URLs in the sitemap.

- Put your website into the site: search operator in Google. For example, site:mywebsite.com and you can see how many URLs Google has indexed looking at the number next to ‘About’.

If there’s a large disparity, in Screaming Frog, click on ‘Sitemaps’ > ‘Non-indexable URLs in Sitemap’ and you’ll be able to view pages which shouldn’t be indexed.

Go through your sitemap and indexed results to see if there are pages which shouldn’t or should have been indexed.
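If you can get both lists as exports, the comparison is simple set arithmetic. Below is a Python sketch: the function name `compare_sitemap_to_index` is mine, and the 5% tolerance is an assumption I picked to sit between the "normal" and "odd" examples above, so tune it to your own judgement.

```python
def compare_sitemap_to_index(sitemap_urls, indexed_urls, tolerance=0.05):
    """Compare the sitemap URL list with the list of indexed URLs.

    tolerance is an assumed threshold (5%) for how much disparity counts as normal.
    """
    sitemap, indexed = set(sitemap_urls), set(indexed_urls)
    larger = max(len(sitemap), len(indexed))
    disparity = abs(len(sitemap) - len(indexed)) / larger if larger else 0.0
    return {
        "in_sitemap_not_indexed": sorted(sitemap - indexed),
        "indexed_not_in_sitemap": sorted(indexed - sitemap),
        "disparity": disparity,
        "needs_review": disparity > tolerance,
    }


# Hypothetical data: 4 URLs in the sitemap, 2 of them indexed.
report = compare_sitemap_to_index(["/a", "/b", "/c", "/d"], ["/a", "/b"])
print(report["in_sitemap_not_indexed"])
print(report["disparity"], report["needs_review"])
```

The two difference lists are the useful part: they tell you exactly which pages to look at rather than just how big the gap is.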

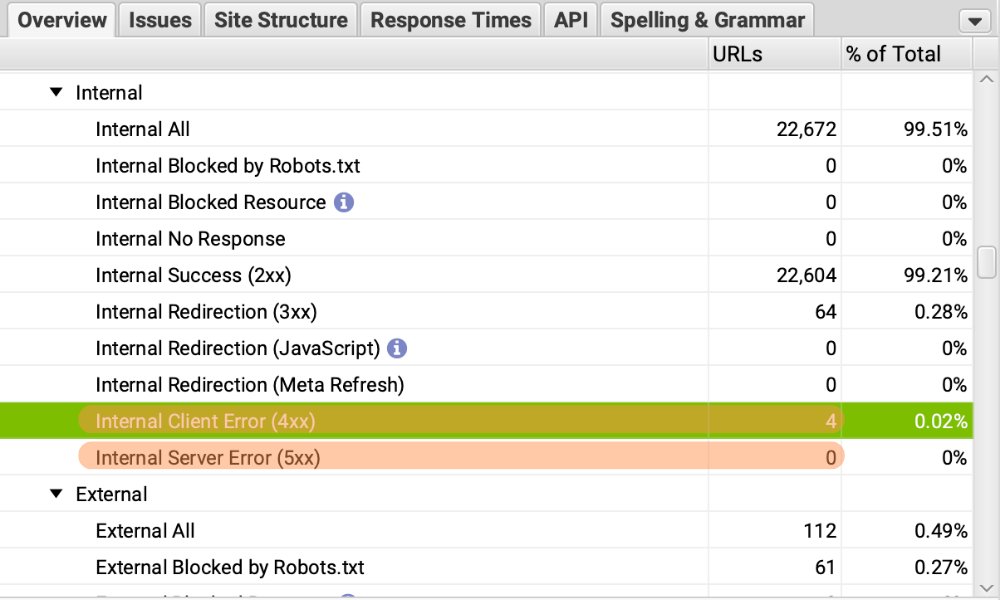

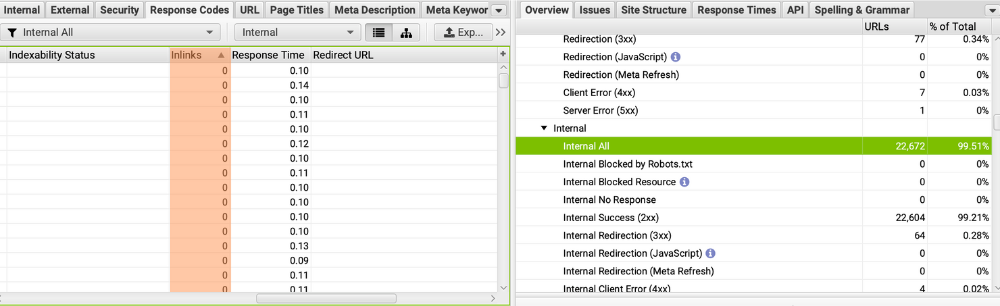

5. 404 errors/Server error

What is this and why does it matter?

A 404 error is a broken page: it doesn’t work and may have been deleted. A server error (5xx) is an error caused on the server side. If a user lands on one of these pages, it’s bad because they’re not getting the content they expected.

Recommended tool

Screaming Frog

How to

- Run your website through Screaming Frog.

- After the crawl has finished, on the right, scroll down to ‘Internal Client Error (4xx)’ and ‘Internal Server Error (5xx)’.

- Click on the ‘Export’ button and you can redirect these broken pages to pages that work or restore the pages.

6. Breadcrumbs

What are breadcrumbs and why do they matter?

Breadcrumbs are a navigational part of a website. It’s a small navigation feature which should appear above the H1 tag. It leaves a trail where the user came through to land on the current page.

For example, let’s say you’re on the Spiderman action figures page. In order to get to that page, you had to go through the ‘home page’, ‘Action figures page’ and the ‘Spiderman action figures page’.

Each of the pages should have a link.

It helps both the user and crawler navigate your website. Breadcrumbs also create internal links which have SEO benefits.

Recommended tool

Your brain! (This is a manual process)

How to

- Make a list of the types of pages you have e.g. category, product, service pages, blog posts etc.

- Then check at least 3 of each type of page and make sure they’ve got breadcrumbs along with links to each page.

That’s it!

7. Crawl depth

What is crawl depth and why does it matter?

Crawl depth is how many clicks it takes to get to a page. Too many clicks to get to a certain page is a bad SEO practice because both the user and the search engine spider will spend more time finding the page they want.

All pages on your site should be no more than 3 clicks away.

Recommended tool

Screaming Frog

How to

- Put your website through Screaming Frog and run the crawl.

- After the crawl has finished, click on the ‘Internal’ tab and scroll to the right to ‘Crawl Depth’

- Click on ‘Crawl Depth’ to see which pages have a click depth above 3. You can also export this if you want to work through it in a spreadsheet.

- If there are pages with a crawl depth above 3, are they important? If not, you don’t need to worry about them.
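If you’re curious how a crawl depth number is worked out, it’s a breadth-first search from the home page over the site’s internal links. Here is a minimal Python sketch on a made-up link map; the `SITE` data and function names are hypothetical, and this is an illustration of the idea rather than how Screaming Frog is implemented.

```python
from collections import deque


def crawl_depths(links, home="/"):
    """Return {page: clicks from home} using breadth-first search.

    links maps each page to the list of pages it links to.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is always the shortest route
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, home="/", max_depth=3):
    """List pages that are more than max_depth clicks from home."""
    return sorted(p for p, d in crawl_depths(links, home).items() if d > max_depth)


# Hypothetical internal link map.
SITE = {
    "/": ["/shop/", "/blog/"],
    "/shop/": ["/shop/desks/"],
    "/blog/": ["/shop/desks/"],
    "/shop/desks/": ["/shop/desks/executive/"],
    "/shop/desks/executive/": ["/shop/desks/executive/large/"],
}

print(crawl_depths(SITE))
print(too_deep(SITE))
```

Adding a link from a shallow page (for example the home page or a main category) to a deep page is what brings its depth down.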

How to fix crawl depth

- Restructure the website. This can be done by consolidating pages and adding new page types and categories to the navigation menus.

- Ensure you have submitted your XML sitemap to Google Search Console.

- Increase the amount of content on paginated pages. For example, include more products or blog posts on each category page.

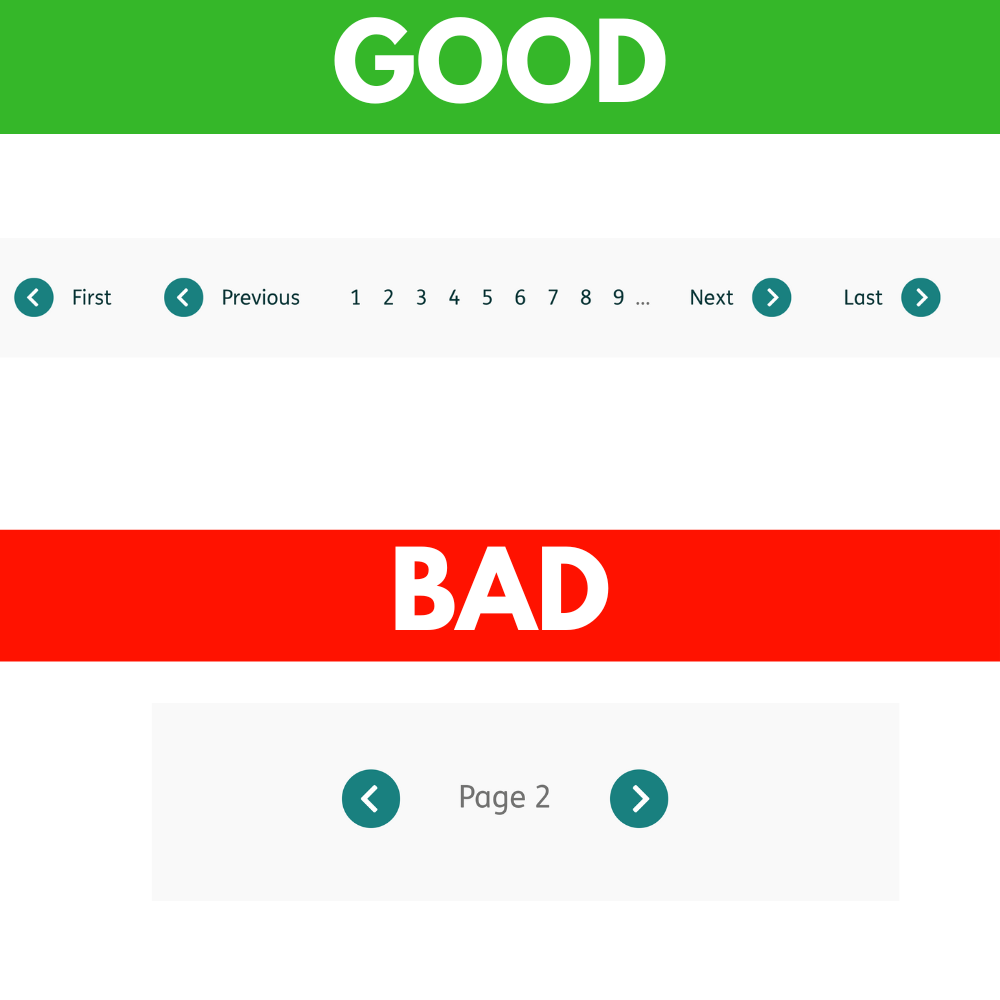

- In the category pages, make sure there’s a link to the root page as well as the last page and a link to the next and previous page. (this means no matter what page you’re on, there’s a link to page 1, to the last page and a link to the next and previous page)

8. Pagination indexability and canonicalising to the root

Ok, so I know this sounds complicated but stick with me here and you will learn a very valuable SEO technique you don’t see on other websites.

What is this and why is it important?

Pagination is a list of content split into multiple pages. For example, let’s say you’re browsing shoes on a website. And for the shoes page there is a page 1, page 2, page 3 etc. That’s pagination.

Another example would be a blog. You’re exploring a blog and you see links to page 1, 2, 3, 4, 5 etc. Pagination is splitting content across multiple pages. You see it all the time.

Now, what does canonicalising to the root mean?

When a page has a canonical tag linking to a different page, it’s telling Google the current page is NOT the primary page. And the link within the canonical is the primary page and that page should be indexed and ranked.

For example:

Yourwebsite.com/a has a canonical link to Yourwebsite.com/b.

Yourwebsite.com/a won’t be indexed and shown in the search engine because there is a canonical link pointing to Yourwebsite.com/b.

Now, take a paginated page, e.g. /shop/page/2/ or /shop/page/7/. Do these pages have a canonical pointing to the root, which would be /shop/?

If there is a canonical to the root page, this is a bad practice as it’s preventing the paginated pages from ranking. Paginated pages should have a self-referencing canonical tag.

For example, yourwebsite.com/shop/page/2/ should have a canonical tag which links to itself, yourwebsite.com/shop/page/2/.

Google themselves have stated that you should not canonicalise your paginated pages to the root. Instead, paginated pages should have a self-referencing canonical. See Google’s official pagination guide.

Should paginated pages rank?

There are different opinions on whether paginated pages should rank on Google. Some SEOs say only the root page should rank, others say paginated pages should also be given the chance to rank.

I’m of the latter opinion. Paginated pages should be given the chance to rank, here’s why…

With only the root page being allowed to index and rank, that’s one string in the water. With multiple pages being allowed to index and rank (paginated pages), there are more strings in the water, increasing the odds of your page ranking.

You and I have probably both seen paginated pages rank. But why wasn’t the root page ranking? Because Google preferred a certain paginated page.

Maybe it’s because certain products were showing on that page and that’s why Google decided to rank it over the root page. You could never have known this if you only allowed the root page to index.

Does Google see paginated pages as duplicate content?

Google does not see paginated pages as duplicates. It does a great job at distinguishing each paginated page, so there is no need for you to change the titles and descriptions.

NOTE: When running your website through various tools, they may flag the paginated pages as duplicate. You can ignore this.

Recommended tool

Your brain! (Manual process)

How to

- Go to your pages which have pagination (Product categories, blog roll etc.)

- On a paginated page, e.g. /blog/page2, right click and click ‘View Page Source’.

- Press Ctrl/Command and F on your keyboard and paste in <link rel="canonical" href=

- There should be a self referencing canonical but if it links to the root page, it’s a fail.
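The manual check above can also be scripted once you have a page’s HTML source. This Python sketch uses the built-in `html.parser` to pull out the canonical link and compare it with the page’s own URL. The class and function names are hypothetical, and the comparison only ignores a trailing slash, so anything more subtle (http vs https, www vs non-www) will show up as a fail.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of the first <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attrs.get("href")


def canonical_of(html):
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def is_self_referencing(page_url, html):
    """True when the page's canonical points at the page itself."""
    canonical = canonical_of(html)
    return canonical is not None and canonical.rstrip("/") == page_url.rstrip("/")


# Hypothetical page sources for /shop/page/2/.
PASS_HTML = '<html><head><link rel="canonical" href="https://yourwebsite.com/shop/page/2/"></head></html>'
FAIL_HTML = '<html><head><link rel="canonical" href="https://yourwebsite.com/shop/"></head></html>'

print(is_self_referencing("https://yourwebsite.com/shop/page/2/", PASS_HTML))
print(is_self_referencing("https://yourwebsite.com/shop/page/2/", FAIL_HTML))
```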

Pagination best practices

- Link pages logically – Page 1 should link to page 2, page 2 should link to page 3, page 3 should link to page 4 etc. And from each paginated page, make sure there is a link back to the root page. Use the <a href> tag for this.

- Make sure each page has a unique URL – yourwebsite.com/shop, yourwebsite.com/shop/page2, yourwebsite.com/shop/page3 etc.

- Give each page a self-referencing canonical.

Pagination bad practices

- Doing nothing – Doing nothing is a way to not be in control of your website.

- Canonicalising to the root page – Having a canonical link on your paginated pages to your root page reduces the chances of your pages ranking.

- Infinite scroll – You might be thinking infinite scroll will fix all your pagination errors. Unfortunately, it’s not that simple.

JavaScript SEO Checks

What is JavaScript SEO and why does it matter?

JavaScript is a popular programming language used on the web. Chances are 99.9% of the websites you use load JavaScript.

Search engines like Google do not treat JavaScript the same way they treat HTML.

You see, when Google lands on your website, it doesn’t see it the same way a user sees it. It’s all written in code.

When a Google crawler lands on your page, it ONLY reads/renders the HTML version. It LATER comes back to that page to render the JavaScript part of it.

WHY DOES GOOGLE INITIALLY ONLY READ THE HTML VERSION OF THE PAGE?

It all comes down to resources. Google’s resources to go out and crawl and render websites are far smaller than you think.

To get a grasp of JavaScript SEO and take your SEO skills to the next level, you NEED to understand how websites work.

Here’s how… this part is a bit daunting but it’s well worth going over a few times until you understand it…

How HTTP requests work

- When you click on a page, your browser (Chrome, Safari, Firefox etc.) requests that page’s content from the server it’s hosted on.

- If that request was successful, the server will send the HTML document of the page you clicked on. This HTML document can reference other files such as CSS, JavaScript and images. If it does, your browser will send separate requests for these files.

JavaScript execution

After the server of the page you clicked on has sent your browser these files, your browser will construct the DOM and render the page.

DOM stands for Document Object Model:

- The Document is the webpage.

- The Objects are all of the elements on the page (<head></head>, <title></title>, <h1></h1>, <body></body>, <p></p> etc.)

- The Model is the hierarchy of those elements in the document (the <title> belongs in the <head>).

When your browser starts constructing the DOM and rendering the page, the JavaScript will modify the DOM. This modification can be small such as loading in chat support or the modification could be huge like loading the entire page’s content.

Loading in the JavaScript is highly taxing on your browser and can add seconds onto the loading time.

When your browser is doing the rendering, this is called client-side rendering. That’s an important term, it will come up a lot in your SEO career.

Here’s the problem

When search engines load in JavaScript, it’s super resource heavy. That’s why Google renders a page in 2 stages: first the HTML and then the JavaScript.

It can cost Google 100x more to load in the JavaScript over the HTML. Now let’s say you have 50,000 pages, that’s a heck of a lot of work for Google and search engines as a whole to do.

With JavaScript heavy websites, it’s going to take a massive amount of time for Google to go through them and get them ranking. And keep in mind Google re-crawls pages to see if changes have been made.

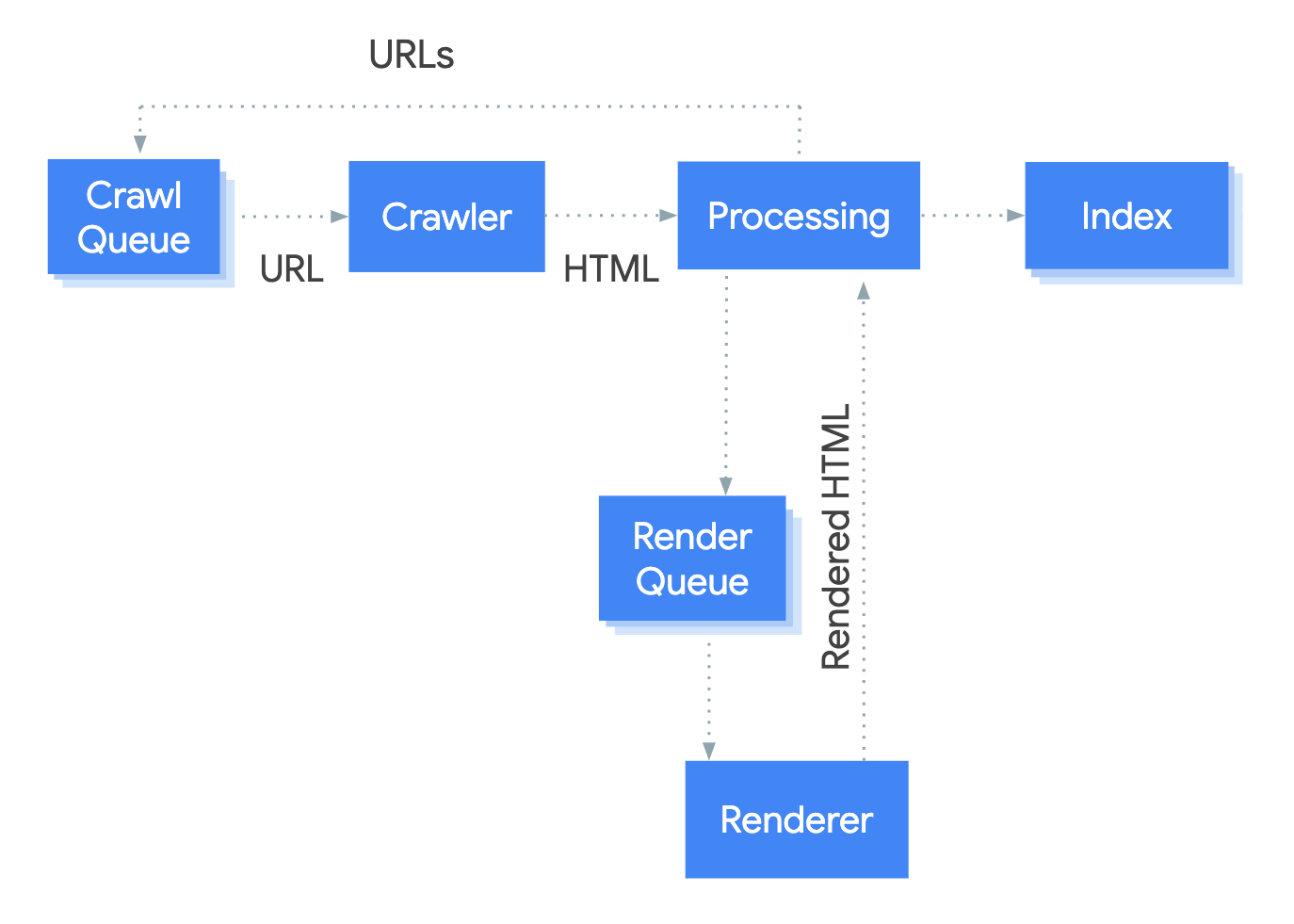

Crawl Queue – Keeps records of all URLs which are yet to be crawled and this is kept updated by Google.

Crawler – The crawler is the Google bot, which receives the URLs from the Crawl Queue and requests the HTML of each page.

Processing – This is where the HTML is evaluated.

(A) Processing receives the pages from the crawler and checks whether they can be indexed. For example, if there’s a noindex tag on the page, then it won’t be rendered or indexed. The crawler will keep checking these types of URLs in case the page has changed to index.

(B) Based on how much the page depends on JavaScript, the need for rendering is evaluated. The page is then sent to the Render Queue. Google will use the HTML response whilst the rendering of the page is going on.

(C) URLs/pages are then canonicalized to see if the page has priority over another page on your website. (This kind of canonicalization is more than having a canonical link element on your page. Canonical signals such as links and sitemaps are taken into consideration as well.)

Render Queue – Keeps a record of URLs that are to be rendered and it’s constantly being updated.

Renderer – is the web renderer which receives URLs that need to be rendered, it renders them and sends the rendered page for further processing.

Index – The content is stored and assessed against the metrics Google uses to rank a page, and its scores are calculated.

Ranking – The algorithm takes information from the index and tries to rank the page where it believes it should rank on the search engine’s results page.

Now you know how the Google search engine works when it comes to processing pages.

Best practices

Keep rendering to a minimum – Don’t use up a bunch of resources for search engines, they don’t like it because it costs time and money. And it will cost you time and money.

- Keep JavaScript to a minimum.

- Minify the code.

- Shrink image sizes.

- If you can use HTML or CSS over JavaScript, then do so.

Make sure critical content is within the initial HTML response – Remember, your site is processed in 2 stages: first the initial HTML response and then the rest of the page files. Make sure your meta data and the HTML of the body are optimised, and include your keywords in these areas.

Tabbed content – Tabbed content that is loaded with JavaScript is only seen in the second (rendering) stage and not the first, because the search engine can’t see it in the HTML. If it requires JavaScript to see the content, it’s a fail.

Make sure the navigation is in the HTML response – This is a must for any site on the web. Make sure the sidebar along with the footer and pagination is also within the HTML response.

If you’re using infinite scroll, this is a bad idea as Google cannot render it. Instead, it’s best practice to have a numbered outline with each page linking back to the first.

Here’s what you must avoid and do instead, since it means Google has to render the page to find the link:

Avoid this:

<a onclick=”goto(‘https://example.com/sofas/’)”>

Instead, do this:

<a href="https://example.com/sofas/">
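If you want to spot this pattern across a page's raw HTML, here's a minimal sketch using only Python's standard library. The class and function names (`LinkAudit`, `audit_links`) are my own for illustration, and the sample markup is made up. It treats any `<a>` tag without a usable `href` as something to flag for review.

```python
from html.parser import HTMLParser


class LinkAudit(HTMLParser):
    """Collects <a> tags and notes which ones lack a crawlable href."""

    def __init__(self):
        super().__init__()
        self.crawlable = []    # href values a crawler can follow without rendering
        self.uncrawlable = []  # attribute dicts of <a> tags with no usable href

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = (attributes.get("href") or "").strip()
        if href and not href.lower().startswith("javascript:"):
            self.crawlable.append(href)
        else:
            self.uncrawlable.append(attributes)


def audit_links(html):
    """Return (crawlable hrefs, attribute dicts of links that need fixing)."""
    parser = LinkAudit()
    parser.feed(html)
    return parser.crawlable, parser.uncrawlable


# Hypothetical sample: one onclick-only link, one normal link.
sample = """
<a onclick="goto('https://example.com/sofas/')">Sofas</a>
<a href="https://example.com/sofas/">Sofas</a>
"""
good, bad = audit_links(sample)
print("Crawlable:", good)
print("Needs fixing:", bad)
```

Feed it the HTML from the initial response (not the rendered DOM), since that's the version you're trying to make link-complete.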

All pages must have a unique URL – Most of the time, search engines will not index fragment URLs as separate pages. These are URLs with a hash symbol. For example:

https://example.com#sofas.

Canonical links must be within the initial response – Google’s John Mueller has mentioned that canonical tags must be within the initial fetch (the non-rendered version) or Google will be doing guesswork.

Don’t use JavaScript to inject canonical tags, use the html.

rel="nofollow" links must be within the initial response – It’s the same with nofollow links as it is with canonicals. They must appear within the initial response and not the rendered version for them to be correctly interpreted.

Other link types – these links must also be within the initial response for them to be correctly interpreted and applied:

link rel="amphtml" attribute

link rel="alternate" hreflang attribute

link rel="prev"/"next" attribute

link rel="alternate" mobile attribute
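To check that these signals really are in the initial response, you can parse the raw (un-rendered) HTML and list what's there. This is a rough sketch using Python's standard library. `HeadLinkParser` and `initial_response_signals` are names I've made up, and you'd need to supply the raw HTML yourself (for example from "view source", not from the browser's inspector, which shows the rendered DOM).

```python
from html.parser import HTMLParser


class HeadLinkParser(HTMLParser):
    """Collects <link rel=...> elements and rel="nofollow" anchors from raw HTML."""

    def __init__(self):
        super().__init__()
        self.links = []     # (rel, href) pairs from <link> elements
        self.nofollow = []  # href values of <a rel="nofollow"> anchors

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower().split()
        if tag == "link" and rel:
            self.links.append((" ".join(rel), attributes.get("href")))
        elif tag == "a" and "nofollow" in rel:
            self.nofollow.append(attributes.get("href"))


def initial_response_signals(raw_html):
    """Summarise canonical, other <link rel> tags and nofollow links."""
    parser = HeadLinkParser()
    parser.feed(raw_html)
    canonicals = [href for rel, href in parser.links if rel == "canonical"]
    return {"canonical": canonicals, "links": parser.links, "nofollow": parser.nofollow}


# Hypothetical raw HTML for illustration.
raw = """
<head>
  <link rel="canonical" href="https://example.com/sofas/">
  <link rel="alternate" hreflang="en-gb" href="https://example.com/uk/sofas/">
</head>
<body><a rel="nofollow" href="/login">Login</a></body>
"""
print(initial_response_signals(raw))
```

If the canonical list comes back empty for a page that shows a canonical in the rendered version, that's a sign it's being injected with JavaScript.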

Allow JavaScript files to be accessed

Do not let JavaScript files be blocked by the robots.txt or noindex. They must be accessed in order for search engines to understand your website.
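Here's a quick way to test a robots.txt file against a list of script and stylesheet URLs, sketched with Python's built-in `urllib.robotparser`. The function name `blocked_resources` and the sample rules are assumptions for illustration. Bear in mind that the standard library parser follows the basic robots.txt rules and may not match Google's handling of wildcards exactly, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the resource URLs that robots.txt disallows for the user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that blocks the JavaScript folder.
robots_txt = """
User-agent: *
Disallow: /assets/js/
"""
resources = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]
print(blocked_resources(robots_txt, resources))
```

Any JavaScript file that shows up in the output is one search engines are being told not to fetch.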

Remove render-blocking JavaScript

When you put your website/page through Google’s PageSpeed Insights tool, you’ll probably see render-blocking JavaScript.

This is code that slows down the loading of your website and when search engines are crawling and rendering your website, it’s going to slow them down also. This is negative for both users and search engines alike. Two entities your site relies on.

Take advantage of lazy loading your media and use inline JavaScript to take a chunk off the load time.

When implementing lazy loading, use html over the JavaScript. Here’s an example of what the tag would look like:

<img src="/images/dog.png" loading="lazy" alt="White dog" width="300" height="300">

By lazy loading through html, search engines can get images from the initial response instead of having to go through the rendering process.

Add a content-hash to your JavaScript file names – Google caches JavaScript at a high rate, this is great if your JavaScript doesn’t change much. But if it does, it’s a problem.

By adding a content-hash to your JavaScript file names, when your JavaScript changes, the hash will be updated and Google knows it will have to do a re-render of it.
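Most build tools can do this for you, but the idea is simple enough to sketch in a few lines of Python. `hashed_filename` is a hypothetical helper, and the 8-character SHA-256 prefix is just one reasonable choice, not a requirement.

```python
import hashlib
from pathlib import PurePosixPath


def hashed_filename(filename, content, length=8):
    """Insert a short hash of the file's bytes before the extension."""
    digest = hashlib.sha256(content).hexdigest()[:length]
    path = PurePosixPath(filename)
    return str(path.with_name(f"{path.stem}.{digest}{path.suffix}"))


# Two versions of the same (made-up) file produce two different names.
v1 = hashed_filename("js/app.js", b"console.log('v1');")
v2 = hashed_filename("js/app.js", b"console.log('v2');")
print(v1)
print(v2)
```

Because the name only changes when the file's contents change, unchanged files can stay cached while updated ones get a fresh URL.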

Rendering Choices

Client-side rendering

Client side rendering is where your browser is doing all of the heavy lifting. This is a major negative experience for users and search engines do not like this as it takes a long time to load.

The browser and crawler will be waiting a long time to retrieve what’s needed.

Server-side rendering

Server-side rendering is the server rendering the web page before sending it to the client (browser).

Instead of having the client render the page, the web server will do it and then send it to the client.

Both the user and Google bot will be treated the same in the sense that they will get the same rendering process when accessing a page. The server will render it and then give the page to the user or bot.

There’s a big positive and a big negative to this. The positive is that all the elements the search engines want are available in the first response, although the JavaScript is still executed after the initial response.

The con is that the user or bot will be waiting longer to get the initial response (TTFB).

Dynamic rendering

Dynamic rendering gives a different response depending on whether a user or a crawler is asking. When the user wants a page, it will go through client-side rendering. When the crawler wants a page, it will go through server-side rendering.

However, if you need something like this, it should only be used as a temporary solution. Google doesn’t see this as cloaking as long as both methods render the exact same content.

This is great for 2 reasons. 1. Everything the search engine needs is available in the first response. 2. It’s easy to implement.

The big negative is it makes debugging a big headache.

What do my rendered pages look like to Google?

- To see what the rendered version of your website looks like, just use Google’s Mobile-Friendly Test. Put a page in there, click on ‘Screenshot’ and take a looksy.

- Yes, the screenshot shows a cut-off version of the page but that doesn’t mean that’s what Google sees. To work around this, use the URL Inspection Tool in Google Search Console.

- Inside the Google Search console, at the top there is a search bar. Put your page in there and click on the button which says ‘Test Live URL’. Click ‘View Tested Page’, click on the screenshot tab and you’ll see what the rendered version looks like.

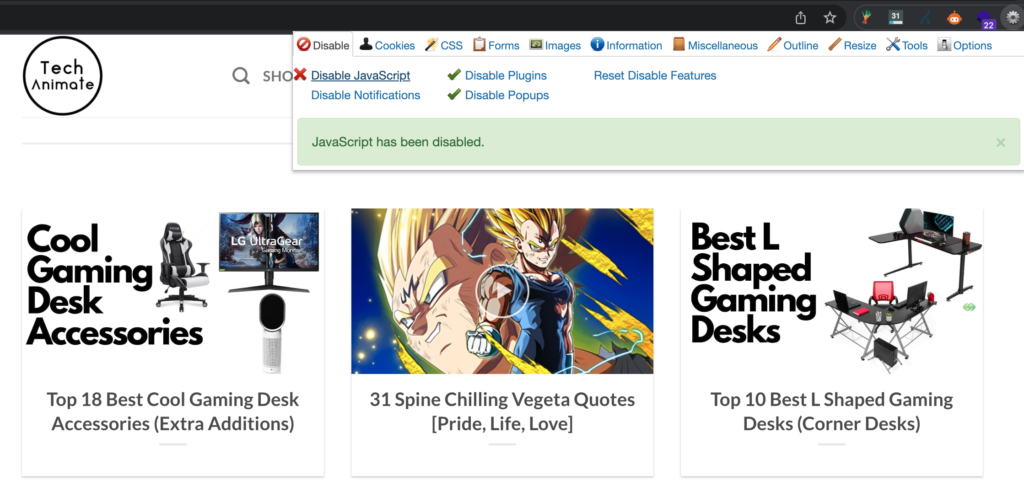

1. How much of the site needs JavaScript to load the content? Is the site operable?

What is this and why does it matter?

If your website is highly reliant on JavaScript, the rendering will be delayed until Google has enough resources to allocate to rendering your pages.

Find out how much of your website is using JavaScript and if the site is heavily JavaScript reliant, it’s time to speak to your devs and get it changed.

Here’s how to find out how much of your website relies on JavaScript…

Recommended tool

Web Developer browser extension.

How to

Make a list of the types of pages you’re going to go over. You don’t need to go over every page on your website because you may have hundreds or thousands of pages and this is a manual process.

Here are some page types you’re going to want to check:

- Home page

- Product/service page

- Category page

- Blog-roll page

- Blog post

You can add to this list. If you have thousands of products, you can’t check every one with this method.

Here’s how you do this:

- Add the Web Developer browser extension and click on the ‘Disable JavaScript’ option. This will prevent JavaScript from loading on any of your pages.

- Go through each type of page on your website. How much of it loads? Do the links still work? Check the menu links first as they are the most important.

Essentially, what you’re trying to do is use your site as normal with JavaScript disabled. If you’re not able to operate it as you would with JavaScript enabled, it’s a problem.

There are some things that you won’t be able to load like the live chat feature if your site has one. You don’t need to worry about features like these not loading in when JavaScript is disabled.

Page Speed Checks

Why does page speed matter so much?

- 100ms in Extra Load Time Cost Amazon 1% Revenue.

- Increasing page speed led to a 76% reduction in the abandonment rate. Source

- Google has mentioned a slow loading page increases the bounce rate by 126%. Source

By simply improving your page speed (reducing your load time), you’ll decrease the bounce rate and see an increase in conversions.

1. Minify JS & CSS

What is Minify JS & CSS and why does it matter?

JavaScript (JS) is a programming language and CSS is a stylesheet language. Your browser has to download and process both to load up a website.

You can reduce the loading time by reducing the amount of code, this is called minifying.
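To make the idea concrete, here's a deliberately naive CSS minifier in Python. It only strips comments and unnecessary whitespace, and it's a sketch for illustration: `minify_css` is my own name, and the regex approach will break on edge cases (such as strings containing braces), so use a proper minifier on a real site.

```python
import re


def minify_css(css):
    """Very naive CSS minifier: strips comments and unnecessary whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove comments
    css = re.sub(r"\s+", " ", css)                         # collapse whitespace runs
    css = re.sub(r"\s*([{};:,])\s*", r"\1", css)           # trim around punctuation
    return css.strip()


# Made-up stylesheet for illustration.
original = """
/* navigation links */
nav a {
    color: red;
    margin: 0 auto;
}
"""
minified = minify_css(original)
print(minified)
print(f"{len(original)} characters before, {len(minified)} after")
```

The browser reads both versions the same way. The minified one is just fewer bytes to download.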

Recommended tool

How to

- Put each type of page such as the home page, category, product page, blog post etc. into the tool.

- Go to ‘Minify CSS’ and ‘Minify JavaScript’.

- If it’s green it’s a pass and red a fail.

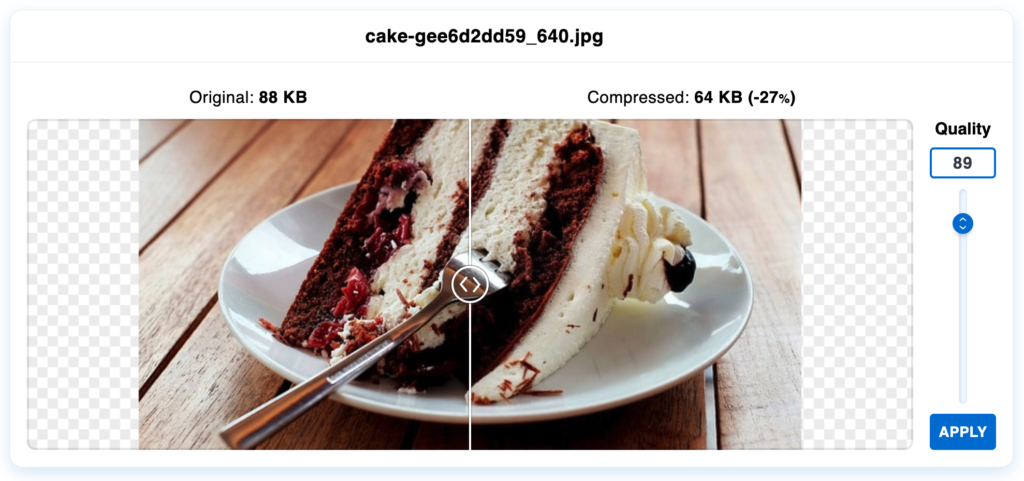

2. Image compression

Why does image compression matter?

Images are a MAJOR reason for slow loading websites. Very few people compress their images before uploading.

When you first start using your new website it will be fast but as time goes on, images will slow it down.

Recommended tool

How to

- Before uploading the image, put it into Optimizilla to compress it. It doesn’t have to be Optimizilla, just a tool that reduces the size. It can be an internal tool within your CMS as well.

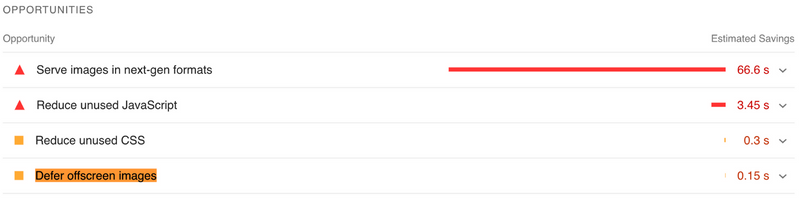

3. Lazy loading images

Lazy loading is an easy way to massively reduce loading times on your website!

Lazy loading is exactly what it sounds like, you’re lazily loading the page.

Wait… what? Wouldn’t that contribute to the slow loading website issue?

No, because the images which are being lazy loaded are not on the screen. But as you scroll, the images will start loading.

This is a simple yet highly, highly effective way to save seconds on your page load time.

Recommended tool

How to

- Put each type of important page into the tool (home page, category, services, product page etc.).

- Press Ctrl/command and F on your keyboard, there will be a box that pops up. Type in ‘Defer offscreen images’. You will be taken to the lazy load metric and see if your site passes the test. You’ll be able to see images that aren’t being lazy loaded.

- To implement lazy loading to your website, you can install a module to your CMS, use a plugin if you’re on WordPress or individually add the lazy load html tag.

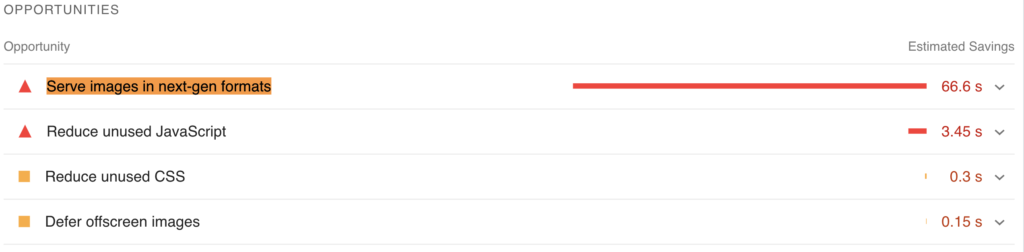

4. Modern image formats

Do you want to compress your image file sizes and still keep your images high quality?

Modern image formats refer to formats such as WebP. WebP is an image format developed by Google and it was designed to replace the JPEG and PNG formats.

By using WebP, you’ll be able to compress files 25% – 34% further compared to the traditional JPEG file.

This should be the standard format for every website.

Recommended tool

How to

- Put each important type of page into the tool (home page, category, product, service pages etc.)

- Find ‘Serve images in next-gen formats’ using Ctrl/command and F.

- This will show you which images are not using WebP.

5. Use a CDN

What is a CDN and why it’s important

CDN stands for Content Delivery Network. A CDN serves copies of your website from servers all around the world. It’s a way to give your website a big page speed boost for international users.

Let’s say your web server is hosted in the US and people from the UK are using your website. Your website will be loading very slowly in the UK.

However, if you’ve got a CDN in the UK, your website will load quickly for UK users too.

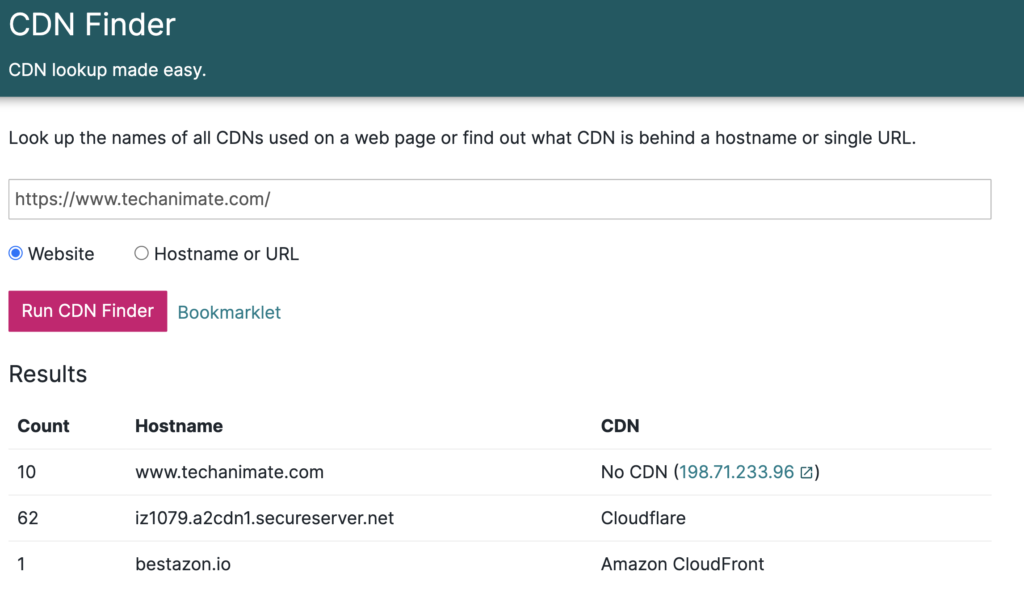

Recommended tool

How to

- Put your website into CDN finder and click ‘Run CDN Finder’

- If it appears as ‘Fail’ or ‘Unknown’ your website does not have a CDN.

You can speak to your host about setting up a CDN.

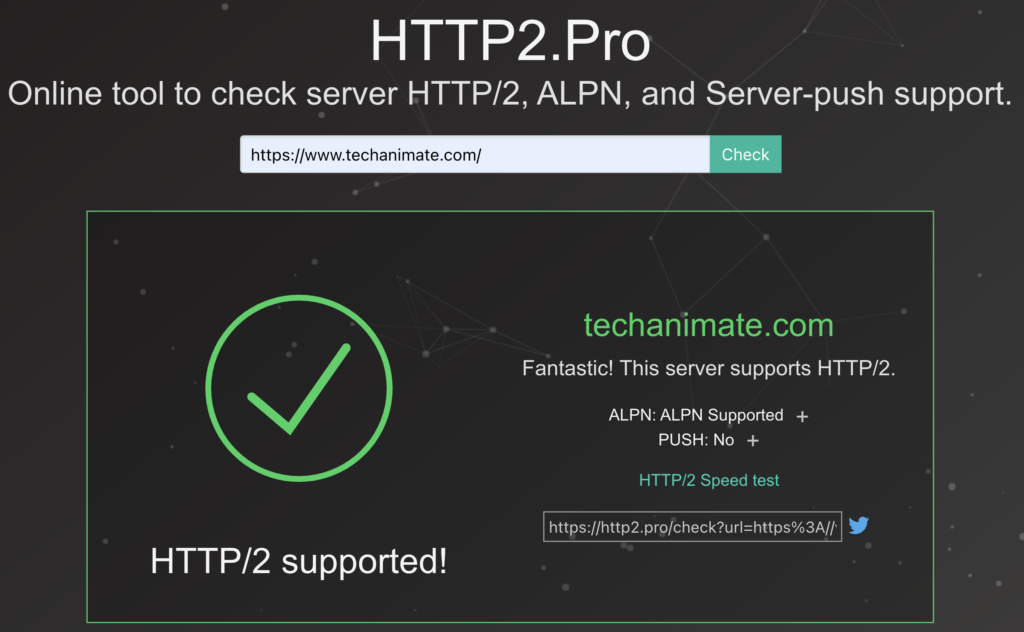

6. HTTP/2

What is HTTP/2 and why does it matter?

HTTP/2 is an improvement over HTTP/1.1. In a nutshell, it helps your website load faster by sending multiple files over a single connection at the same time, so one slow resource doesn’t hold up the others.

You don’t need to understand the exact technicalities unless you’re a developer. Or at least I wouldn’t worry about it.

Recommended tool

How to

- Put your website into Http2 Pro and if you get the tick sign it’s a pass.

7. Are images the correct pixel size for the space they fill?

Oftentimes pages are displaying images which go over the pixel size for the box it fills.

For example, the image is displayed on the screen at 500×500 but the file the browser has to download is 1920×1080. That’s a waste of resources because the image doesn’t need to be that large for the container it fills.

You can slash a chunk out of your loading time by fixing this.
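Using the numbers from the example, here's the arithmetic as a small Python sketch. `oversize_factor` is a hypothetical helper. It compares raw pixel counts only, and it ignores high-density screens, where you may deliberately serve an image larger than the displayed size.

```python
def oversize_factor(natural, displayed):
    """How many times more pixels the file contains than the slot needs."""
    natural_w, natural_h = natural
    displayed_w, displayed_h = displayed
    return (natural_w * natural_h) / (displayed_w * displayed_h)


factor = oversize_factor(natural=(1920, 1080), displayed=(500, 500))
print(f"The file has {factor:.1f}x the pixels the slot needs")
```

A factor close to 1 means the image is sized about right. The further above 1 it is, the more there is to gain from resizing.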

Recommended tool

How to

- Put each type of page such as the category, service, product page, blog post etc. into the tool.

- Go to ‘Image elements do not have explicit width and height’, if it’s red then it’s a fail.

Content Checks

This section is all about the actual content of your website. Internal links, words you’ve written and duplicate content is what we’ll be going over in this section.

You can completely transform how much traffic your website receives by making a few tweaks to the content. This section covers easy wins for your website.

1. Orphaned pages

What are orphaned pages and why does it matter?

Orphaned pages are pages without any internal links pointing to them. They can only be found and indexed via the sitemap. It’s difficult for users to get to these pages as well as the search engine.

Without internal links, your pages will struggle to rank. You may not be bothered about fixing all orphan pages due to their role and significance. However, if there are pages you want to rank which are orphaned, you need a list of these and get to work.

Recommended tool

Screaming Frog

How to

- Crawl your website with SF.

- Under ‘Internal’ click on ‘All’. Scroll to the right until you see ‘Inlinks’ and sort the column from lowest to highest. The pages showing 0 are the ones without internal links pointing to them.

- Click ‘Export’ and in the sheet, you’ll be able to see the ‘inlinks’ tab.

- Prioritise the pages you want to rank and find pages related to them so you can link to those orphaned pages.

Now that you have a list of pages with 0 internal links, you can start shooting links at them.
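If you'd rather work from raw data, the same check is easy to reproduce. This is a minimal sketch in Python, assuming you have a list of known URLs (say, from the sitemap) and a list of internal links as source and target pairs. `find_orphans` and the sample URLs are made up for illustration.

```python
def find_orphans(pages, links):
    """Return pages that no other page links to.

    pages: iterable of URLs known to exist (for example, from the sitemap).
    links: iterable of (source_url, target_url) internal links from a crawl.
    """
    inlinks = {page: 0 for page in pages}
    for source, target in links:
        if target in inlinks and source != target:  # ignore self-links
            inlinks[target] += 1
    return sorted(page for page, count in inlinks.items() if count == 0)


# Hypothetical three-page site where one blog post has no inlinks.
pages = ["/", "/blog/", "/blog/protein-powders-for-teens/"]
links = [("/", "/blog/"), ("/blog/", "/")]
print(find_orphans(pages, links))
```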

Let’s say you’ve got an orphan page called, ‘Top 10 protein powders for teens’. Find other pages that are related to protein and teenagers and link from them to the orphaned page.

Use the site search function:

site:yourwebsite.com YourArticleTitle

With this, you’ll be linking to a relevant page from a relevant page, increasing your page authority.

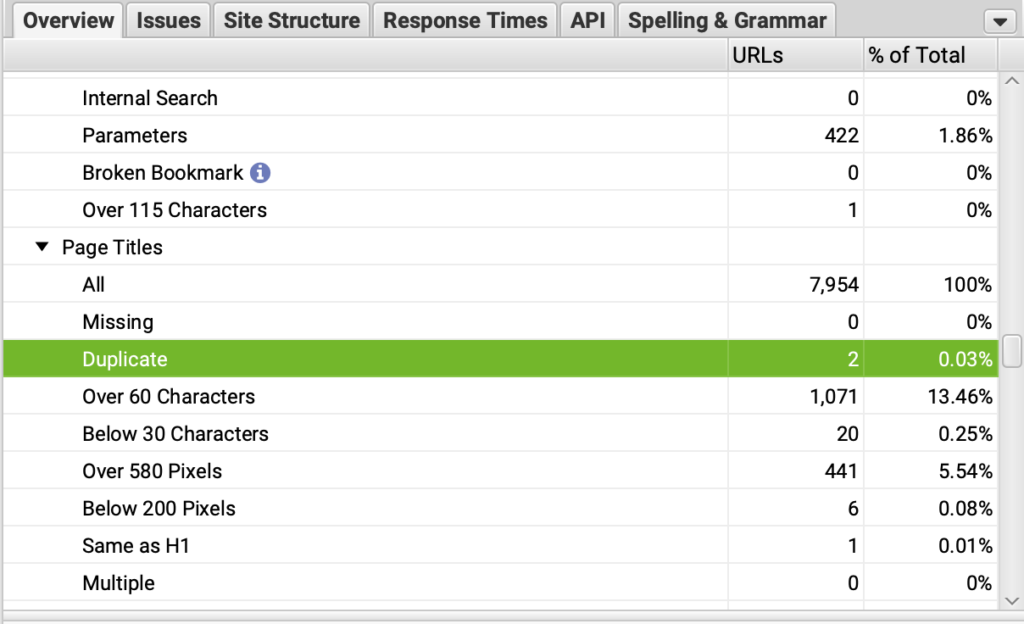

2. Duplicate meta titles

What are duplicate meta titles and why does it matter?

The meta title is the blue title you see when you do a Google search. A duplicate meta title is having more than one page with that exact meta title.

Duplicate meta titles are very bad for SEO because the meta title is one of the strongest on page signals. If you’ve got 2 pages with the meta title, ‘How to train your dog’, Google may not know which one is the primary version and as a result, neither of them may rank.

This is called keyword cannibalization.

Recommended tool

Screaming Frog.

How to

- Put your domain into Screaming Frog and run the crawl.

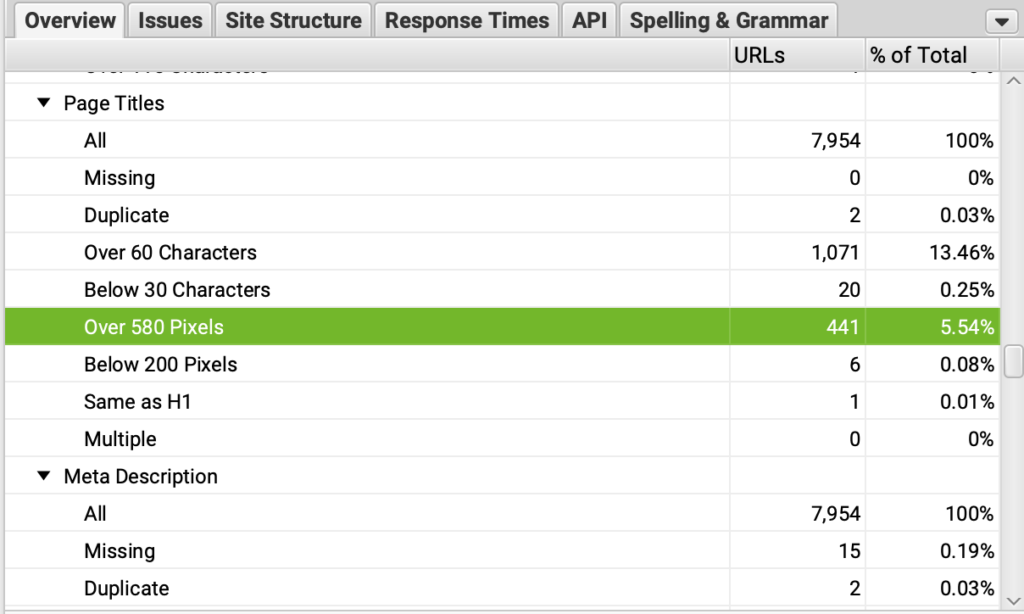

- In the box on the right, scroll down to ‘Page Titles’ and you’ll be able to see the number of duplicate meta titles under ‘Duplicate’.

- On the left, click on the ‘Page Titles’ tab. There will be a box under it, click on it and click ‘Duplicate’.

- Click on ‘Export’ and you’ll be able to see all the duplicate meta titles within the sheet.
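Once you've got titles in a spreadsheet or script, grouping the duplicates is straightforward. Here's a small Python sketch. `duplicate_titles` is my own helper, and treating titles as equal regardless of case and extra spaces is an assumption on my part, so it may not match Screaming Frog's report exactly.

```python
from collections import defaultdict


def duplicate_titles(titles_by_url):
    """Group URLs by normalised title and keep only titles used more than once."""
    groups = defaultdict(list)
    for url, title in titles_by_url.items():
        groups[" ".join(title.lower().split())].append(url)
    return {title: sorted(urls) for title, urls in groups.items() if len(urls) > 1}


# Made-up crawl data for illustration.
crawl = {
    "/dog-training/": "How to train your dog",
    "/blog/train-your-dog/": "How To Train Your Dog",
    "/cat-training/": "How to train your cat",
}
print(duplicate_titles(crawl))
```

The same function works for duplicate H1s if you pass in H1 text instead of titles.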

3. Meta titles over 580 pixels

What are meta titles over 580 pixels and why does it matter?

When the meta title goes over 580 pixels, the title is cut off and sometimes can be rewritten by the search engine. This is bad because it makes the meta title less appealing, especially if Google decides to reword the title.

People often think meta titles cut off after 60 characters. This is false. Meta titles cut off after 580 pixels. You can have fewer than 60 characters and the meta title can still be cut off, because wide characters such as ‘W’ take up more pixels than narrow ones such as ‘i’.

Recommended tool

Screaming Frog.

How to

- Put your domain into Screaming Frog and run the crawl.

- Click on ‘Page Titles’, > ‘Over 580 Pixels’.

- Click on ‘Export’ and you’ll be able to see all of the meta titles over 580 pixels.

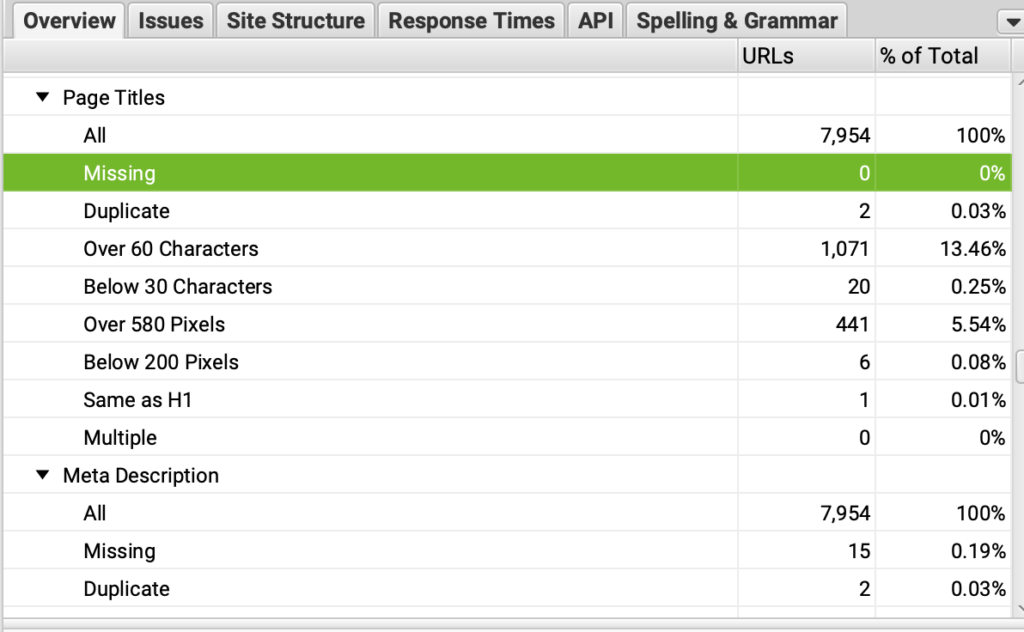

4. Missing Meta titles

What are missing meta titles and why does it matter?

Missing meta titles is exactly what it sounds like. It’s meta titles that don’t exist. If you don’t set a meta title, Google will take it from your H1 or generate the title themselves.

To avoid confusing the search engine, you should have a unique meta title for each page you intend to rank. That meta title should be the same as the H1 title.

Recommended tool

Screaming Frog

How to

- Put your domain into Screaming Frog and run the crawl.

- Click on ‘Page Titles’ and click ‘Missing’.

- Click ‘Export’ and you’ll be able to see the missing titles.

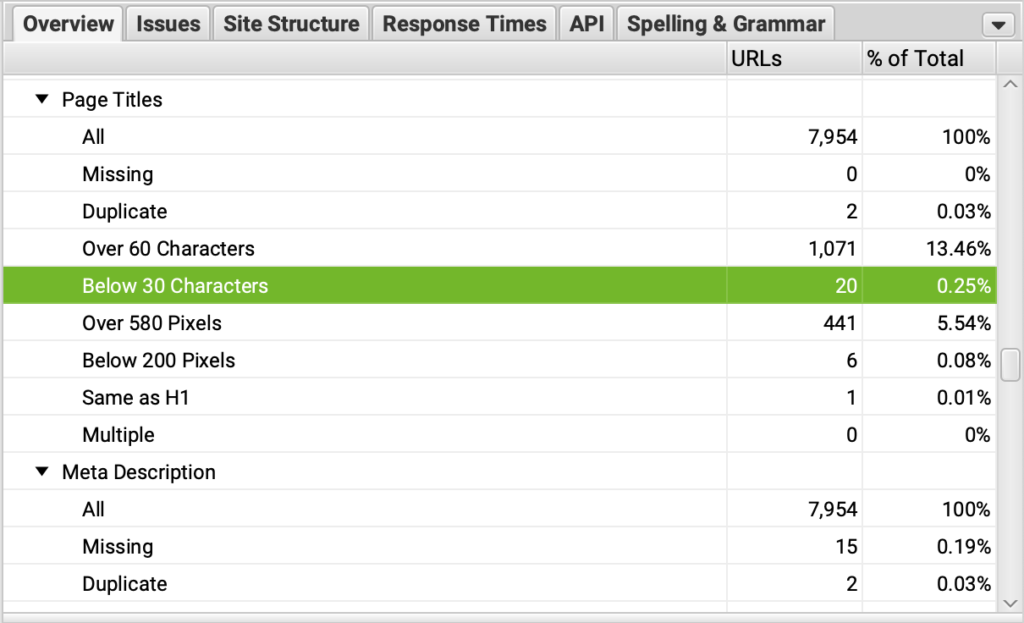

5. Meta titles under 30 characters

What are meta titles under 30 characters and why does it matter?

Meta titles under 30 characters are titles that aren’t taking up all the characters. It’s best to get in as many characters as possible to gain as much space on the SERPs as you can.

The meta title has the most impactful role when it comes to on page SEO. That’s why you should not let any of it go to waste and try to fit as many keywords in there as long as it makes sense.

Recommended tool

Screaming Frog.

How to

- Put your domain into Screaming Frog

- Click on ‘Page Titles’ > ‘Below 30 Characters’.

- Now export the data by clicking the ‘Export’ button.

This data can reveal pages that aren’t taking advantage of inserting keywords in the meta title.

Work your way through the exported sheet and make sure to optimise the most important pages first.

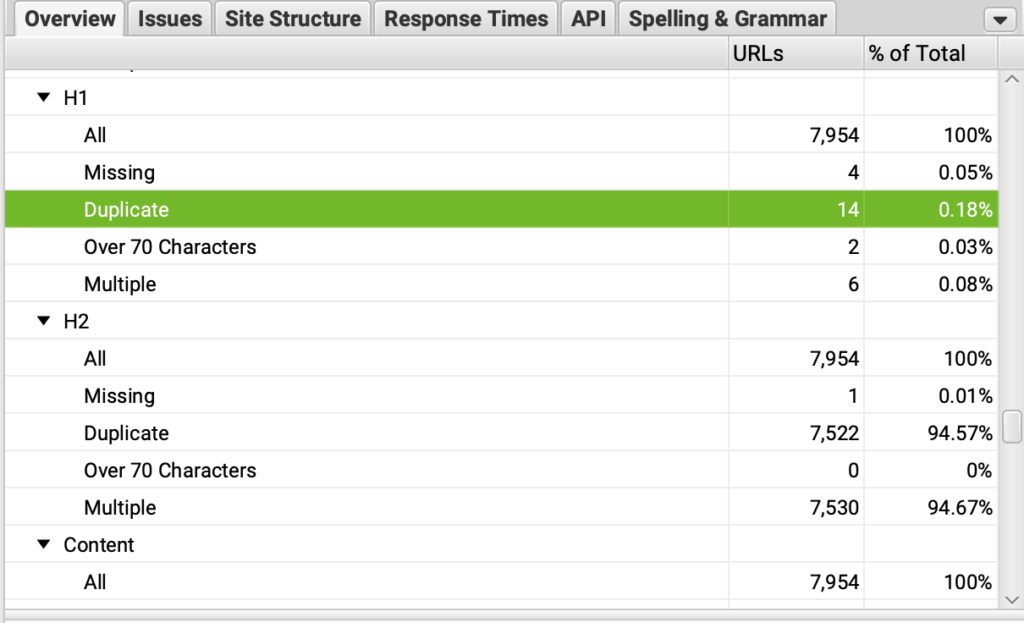

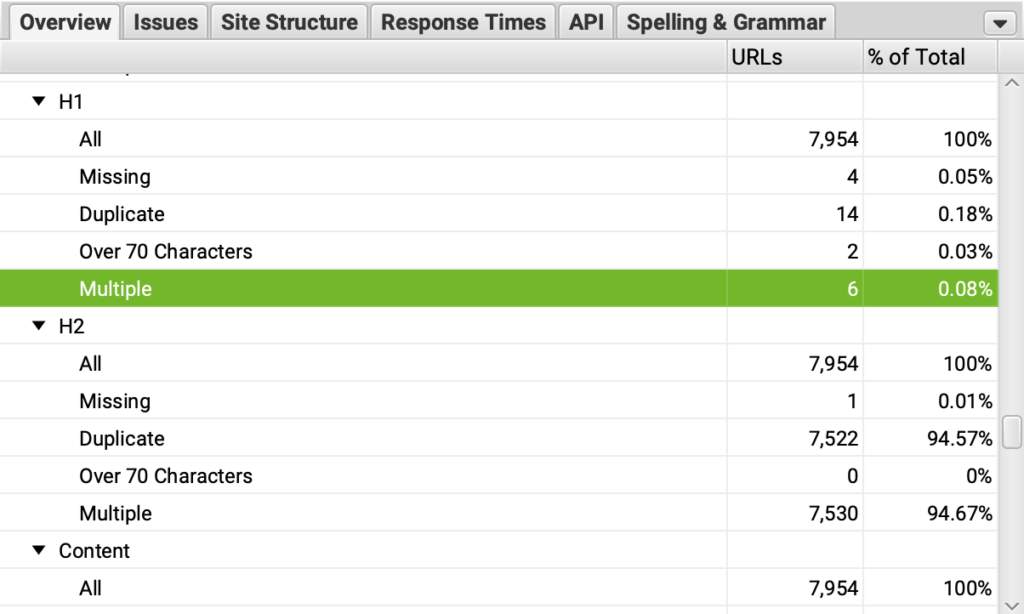

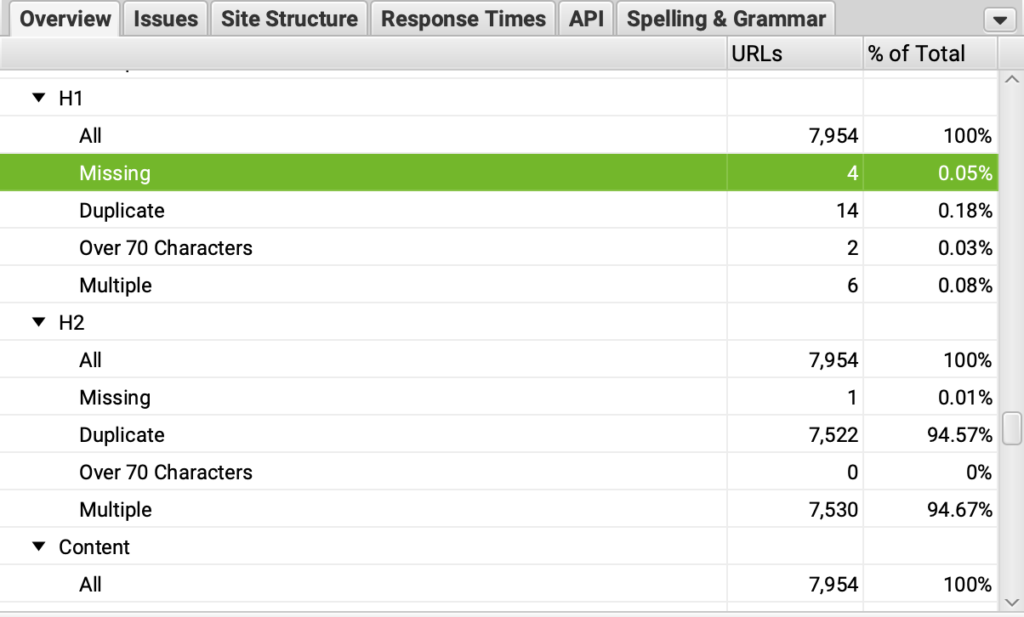

6. Duplicate H1 titles

Duplicate H1 titles are H1 titles that appear on more than 1 page. This can confuse search engines as to which page should be seen as the primary version. This is because the H1 is given a lot of ranking power by the search engine, since it tells the search engine what the page is about.

Having duplicate H1 titles causes cannibalization.

Recommended tool

Screaming Frog.

How to

- Put your website into Screaming Frog

- Click on ‘H1’ > ‘Duplicate’.

- Click on the ‘Export’ button. In the exported sheet, the duplicates are under the ‘Occurrences’ tab.

7. Multiple H1s per page

There should only be a single H1 per page. Having multiple H1 tags on your page is a bad practice. Think of the H1 as the title of a book, it wouldn’t make sense if the same book had multiple titles.

Recommended tool

Screaming Frog.

How to

- Put your website into Screaming Frog.

- Click on the ‘H1’ > ‘Multiple’.

- Click on ‘Export’ and in the sheet you’ll see the data under the ‘H1-2’ column to see the issues.

8. Pages with missing H1s

H1s are one of the biggest on page ranking factors. It’s important to have a H1 tag with your primary keyword in for every page you want to rank.

Recommended tool

Screaming Frog.

How to

- Crawl your website with Screaming Frog.

- Click on ‘H1’ > ‘Missing’.

- Click the ‘Export’ button and in the exported sheet, you’ll be able to see all the URLs with missing H1 tags.
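For a quick spot check on a single page (covering both this check and the multiple H1 check above), you can count the H1 tags in the HTML yourself. A minimal Python sketch, with `H1Counter` and `h1_status` as made-up names:

```python
from html.parser import HTMLParser


class H1Counter(HTMLParser):
    """Counts opening <h1> tags in a document."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "h1":  # the parser lowercases tag names, so <H1> matches too
            self.count += 1


def h1_status(html):
    """Return 'missing', 'ok' or 'multiple' depending on the number of H1s."""
    counter = H1Counter()
    counter.feed(html)
    if counter.count == 0:
        return "missing"
    return "ok" if counter.count == 1 else "multiple"


print(h1_status("<h1>Bird Food</h1><h2>Seed mixes</h2>"))
print(h1_status("<h2>Seed mixes</h2>"))
```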

9. Is there evidence of keyword stuffing?

Keyword stuffing is blatantly placing keywords on the page to the point it sounds unnatural. This leads to the web page being over optimised and your rankings suffering as a result.

Example:

“Here’s how you bake chocolate chip cookies. First to make the chocolate chip cookies you must put all the ingredients for the chocolate chip cookies together. The ingredients for the chocolate chip cookies are as follows. The first chocolate chip cookie ingredient is…”

It’s obvious that ‘chocolate chip cookies’ has been used too many times.
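If you want a number to back up your gut feeling, you can count how often a phrase appears and what share of the words it takes up. This is a rough Python sketch. `phrase_density` is a hypothetical helper and there's no official threshold for what counts as too much, so use it to compare pages rather than to pass or fail them.

```python
import re


def phrase_density(text, phrase):
    """Return (occurrences, share of all words taken up by the phrase)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = phrase.lower().split()
    n = len(target)
    if not words or n == 0:
        return 0, 0.0
    # Slide a window of n words across the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits, (hits * n) / len(words)


# Made-up snippet for illustration.
snippet = "Buy red shoes. Red shoes are great. Shoes!"
hits, share = phrase_density(snippet, "red shoes")
print(f"{hits} occurrences, {share:.0%} of all words")
```

A page where one phrase takes up a large share of the words is worth a manual read.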

If you purchased the website from someone, they may have implemented bad SEO practices such as keyword stuffing. If it’s a client, a previous agency may have implemented keyword stuffing.

Recommended tool

Your brain! (Manual work, no tool this time)

How to

You don’t need to check every page as manually checking thousands of pages is not possible. Instead, explore each type of page, this can include:

- Blog post

- Category page

- Product page

- Service page

You can add more types of pages but these are the main ones. Have a read of a few pages for each of these types of pages.

If there’s no sign of keyword stuffing, it’s a pass, if you spot keyword stuffing, make a note of these pages.

They may need to be rewritten altogether or just tweaked. Having trained writers to find and solve this issue will be of great use.

10. Are keywords within the body?

The body is a html tag and it’s where most of the page’s content goes. Having your keyword in the body appear at least once is good practice. It helps signal to the search engine what your page is about.

You can take it a step further by placing the keyword within the first 100 words of the body to give the search engine a slightly stronger signal to what the page is about.

Recommended tool

Your gigabrain!

How to

Make a list of each type of page you have, this can include:

- Category pages

- Product pages

- Blog posts

- Etc.

Hit control+F or Command+F if you’re on Mac. Type in your target keyword, and if you see your target keyword it’s a pass, if not, it’s a fail.

If you find that most of the pages you check do not have the target keyword in the body, flag this as something for you to fix. And when you do come around to fixing this issue, make sure you prioritise the most important pages.

The most important pages are:

- Pages with the most traffic

- Pages with the most potential traffic

- Pages with the most links (internal and external)

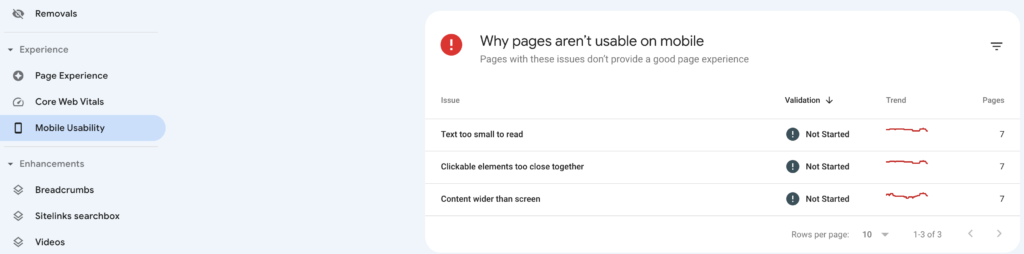

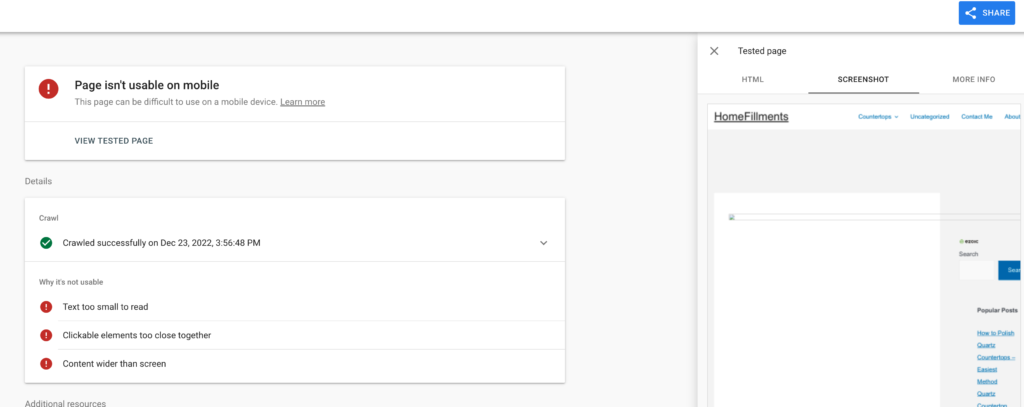

11. Are fonts large enough to read clearly?

Fonts must be large enough to read or else users will struggle with your content. Search engines also assess the font size, and your rankings will be negatively impacted if the fonts are not large enough.

Yes, Google can also detect fonts that are too small.

Recommended tool

Google Search Console.

Manual review.

How to

- Go into Google Search Console

- Click on ‘Mobile Usability’

- If there are issues with the font, it will appear as ‘Text too small to read’.

- Click on ‘Text too small to read’ and the URLs will appear.

- Click on a URL and ‘TEST LIVE PAGE’ and you’ll be taken to Google’s Mobile Friendly Test.

- After the test has finished, click on ‘VIEW TESTED PAGE’ and click on SCREENSHOT. Here you can see what your page looks like.

Work your way through these pages.

12. Are hyperlinks clear?

Hyperlinks are links that reference another page. These hyperlinks are there to lead the user to helpful content and understand the topic better. These hyperlinks should not be hidden and should be clear to see.

Recommended tool

Manual review.

How to

It’s simple, just find a link within the body of the page, is the link clear? If not, change the colour.

13. Is the font colour too light?

Sometimes a user will strain their eye because they’re having to squint when reading content. It’s your job to make sure everything on your page is served to the user on a silver platter.

Make reading your content as pleasant as possible and font colour plays a role in this.

Recommended tool

Manual review.

How to

It’s straightforward: explore different pages on the website, such as blog posts, product pages and categories. Ask yourself, ‘is the font too light?’. Ask other people too to get a better perspective.

14. Are the heading structures logical?

This one gets missed by a LOT of people.

The H1 is the title of the page, there shouldn’t be a H2 title above it because it doesn’t make structural sense. If you’ve got a H2, H3 on the page and decide to use a H5, can you use a H4 instead?

Example of a structure that’s illogical:

<H1></H1>

<H3></H3>

<H2></H2>

<H5></H5>

<H1></H1>

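In the above, there are a few problems. First, there are multiple H1s. Second, there’s a H3 above the first H2 when it should be the other way around. Third, the H5 comes straight after a H2, skipping the H3 and H4 levels. It’s OK to have a H3 above a H2 as long as there are H2(s) already above the H3.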

Example of a logical structure:

- H1
  - H2
  - H2
    - H3
      - H4
      - H4
      - H4
      - H4
    - H3
      - H4
      - H4
  - H2
    - H3
  - H2
  - H2
  - H2

As you can see, this page doesn’t have a muddled structure. It’s clean and tags are ordered.
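The rules above can be written down as a small checker. Here's a sketch in Python that takes the heading levels of a page in the order they appear and lists anything that looks off. `heading_issues` is my own name, and the three rules it applies (start with a H1, only one H1, don't skip levels on the way down) are my reading of the examples in this section.

```python
def heading_issues(levels):
    """Check a page's heading levels (e.g. [1, 2, 3]) in document order."""
    issues = []
    if levels and levels[0] != 1:
        issues.append(f"first heading is H{levels[0]}, not H1")
    if levels.count(1) > 1:
        issues.append("more than one H1")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:  # e.g. H1 followed directly by H3
            issues.append(f"H{current} follows H{previous}, skipping a level")
    return issues


# The illogical example: H1, H3, H2, H5, H1
print(heading_issues([1, 3, 2, 5, 1]))

# The logical example returns an empty list.
print(heading_issues([1, 2, 2, 3, 4, 4, 4, 4, 3, 4, 4, 2, 3, 2, 2, 2]))
```

Going back up (say from a H4 to a H2) is fine, which is why the checker only flags jumps in the deeper direction.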

Recommended tool

Detailed SEO Extension (Chrome Extension)

How to

- Click on the Detailed SEO Extension and click on ‘headings’

- Here, you will see what the headings are

- Check each type of page such as blog posts, categories, product pages etc. to see if it’s following a logical structure.

Oftentimes you will find that the breadcrumbs appear as a H2 above the title of the page which is a H1.

Fix this by having the breadcrumb as normal text instead of a heading.

15. Is the search intent right?

If the search intent isn’t right, no amount of technical SEO fixes, on page SEO and backlinks will help. Here’s how to get the search intent right…

There are 3 types of search intents:

- Navigational

- Informational

- Commercial

Navigational is when someone is trying to find something. For example, someone Googling Facebook is trying to find Facebook.

Informational is all about, you guessed it, the user looking for information on a topic. Such as, ‘How many legs does a horse have?’

Commercial intent is all about the user being about to buy something or close to making a purchasing decision. For example, ‘Buy PS5’, ‘PS5 review’. The user is in the market for a PS5.

We can dive deeper into each search intent, however, I’ll only be going over the commercial intent as it is the most profitable.

Recommended tool

A manual review.

How to

Let’s say the keyword you’re targeting is, ‘Spiderman action figures’, what type of content appears? Is it product pages, category pages, blog posts?

Let’s say category pages appear the most, then that’s the type of page you want to make in order to target the keyword ‘Spiderman action figures’.

Take a look at the category pages on the first page results. Are there lots of variations of spiderman action figures? If so, you will want variety within your category page.

See what’s already ranking for your target keyword. The answer is on the first page of Google.

Another example would be for the keyword, ‘Are Samsung dishwashers any good?’

Look at what type of page appears most in the search results. Let’s say the most common type of page for the keyword ‘Are Samsung dishwashers any good?’ is blog posts.

What kind of blog posts are they?

Are these blog posts reviewing different types of Samsung dishwashers and then giving their verdict? Are these blog posts formed of user generated content such as a collection of reviews?

When analysing the search intent, exploring the first page of Google will reveal the answer.

What if there’s no content around that keyword?

Let’s say the keyword(s) you’re targeting doesn’t have any competition, no one has created a page dedicated to it. You won’t be able to use the above to find the answers.

In this case, you’ll have to give it an educated guess. If it’s an informational type of keyword, it’s likely going to be a blog post. What kind of blog post, that’s up to you or your content creator to decide.

If it’s a commercial keyword, then it’s probably going to be a category page, product page or a review type of blog post. Do what you think is best.

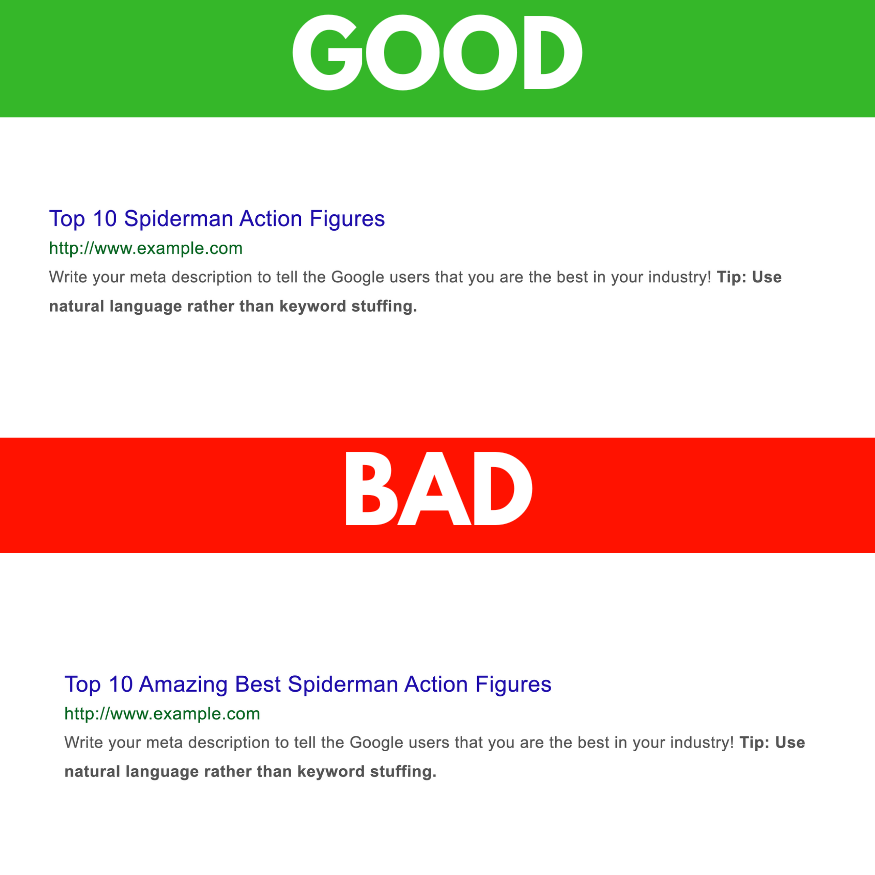

16. Is the primary keyword in the meta title close to the beginning

Having your keyword closer to the left of the meta title is a proven way to increase rankings. Not many people know this or take advantage of this.

Bad Example:

Top 10 Amazing Best Spiderman Action Figures

Good example:

Top 10 Spiderman Action Figures

As you can see, the keyword is ‘Spiderman Action Figures’. The 2nd example drops the filler words so the keyword sits closer to the beginning.

Don’t expect this to yield massive results but it is another ranking signal.

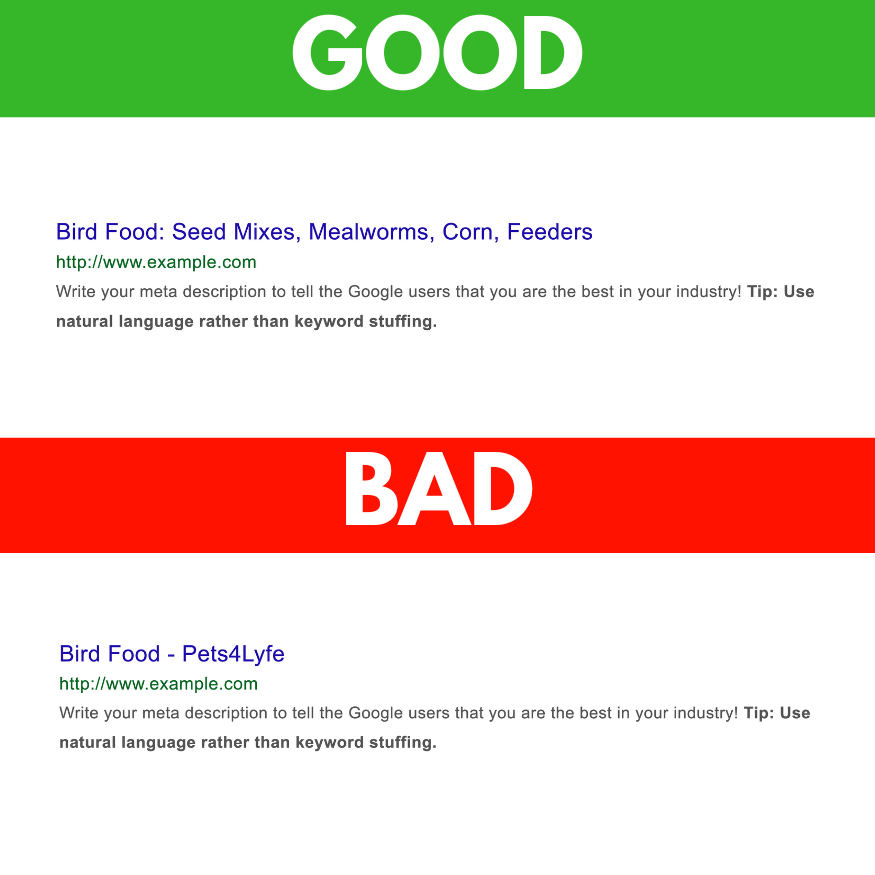

17. Are page titles descriptive?

Meta titles have a massive impact on rankings. That’s why it’s important to fit as many keywords into the title (as long as it makes sense).

Sometimes, page titles will have the target keyword in and then the brand name. When there’s no need for the brand name to be in there (unless that’s what the user is looking for) that space can be used for other keywords.

Example:

Target keyword: Bird Food

Unoptimised Meta title: Bird Food – Pets4Lyfe

Optimised Meta Title: Bird Food: Seed Mixes, Mealworms, Corn, Feeders

I went ahead and did a bit of keyword research and found that the above were the types of bird food users were searching for on Google.

Now this page has a higher chance of ranking for multiple keywords.

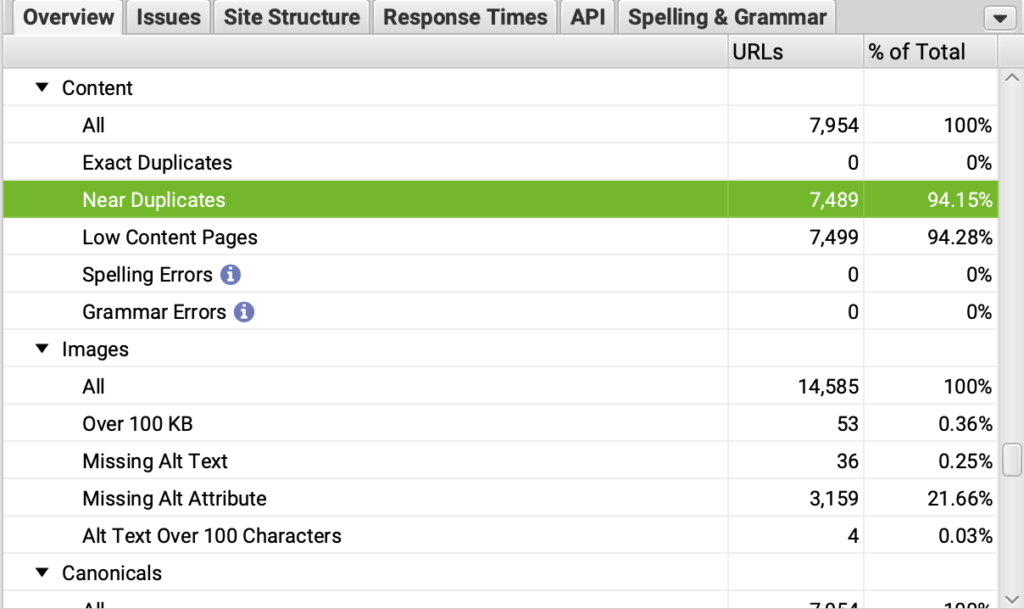

18. Duplicate content

Duplicate content is content that’s exactly the same which is available on multiple pages. This causes keyword cannibalization and as a result, rankings suffer.

It’s OK for content to be duplicate to a certain extent. For example, the Menus, they’re going to be the exact same throughout the website. The main duplicate content concern is content within the body.

Recommended tool

Screaming Frog

How to

- Run the Screaming Frog crawl according to the above.

- Once complete, go to the ‘Overview’ tab on the right, scroll down to ‘Content’ and click ‘Near Duplicates’. You can then export the list.

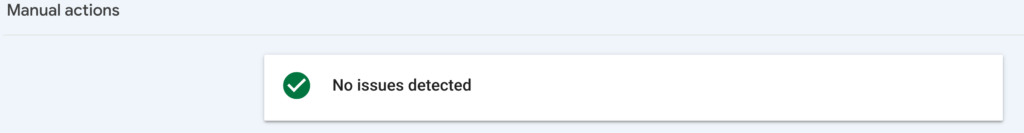
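To get a feel for what "near duplicate" means, here's one common way to score how similar two pieces of body text are: break each into overlapping groups of words and compare the sets. This is a sketch in Python, not how Screaming Frog does it internally. `shingles` and `similarity` are my own names and the group size of 3 is an arbitrary choice.

```python
def shingles(text, size=3):
    """Set of overlapping word groups ('shingles') of the given size."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=3):
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 1.0  # two empty texts are treated as identical
    return len(a & b) / len(a | b)


# Made-up product descriptions that differ by one word.
page_a = "our red sofa is soft durable and easy to clean"
page_b = "our blue sofa is soft durable and easy to clean"
print(f"{similarity(page_a, page_b):.0%} similar")
```

Run it on the body copy only. Menus and footers are the same on every page and would inflate the score.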

Penalty Checks

If you’ve got a penalty, your website will die unless you tackle the penalty as quickly as humanly possible.

1. Manual penalty

Manual penalties occur when someone at Google manually reviews your website and decides to give you a penalty. This in turn causes traffic to dramatically decline and can easily take months for the traffic to return to pre-penalty levels after the penalty has been removed.

Recommended tool

Google Search Console

How to

- Go into Google Search Console

- Click ‘Security & Manual Actions’

- Click ‘Manual Actions’ and if you’ve got a penalty it will appear here.

How to fix it

If you’ve been unfortunate enough to be slapped with a manual penalty, you will be given the reason why.

The reason could vary, such as:

- Unnatural links to your site

- Keyword stuffing

- Spam content

- And more

Now that Google has given their reason for giving you a penalty, you can work on fixing it. For example, if you’ve got a penalty because of your links, do a backlink audit and disavow those links.

Then you would go back into the manual penalty section and click ‘Request a review’ for somebody from Google to check it.

If they’ve lifted the penalty, it can easily take 6 months for your traffic to recover.

If you’ve been keyword stuffing, look into your content (including schema) to see how many times you’ve mentioned a keyword. You can use a tool such as Surfer SEO to help you with this.

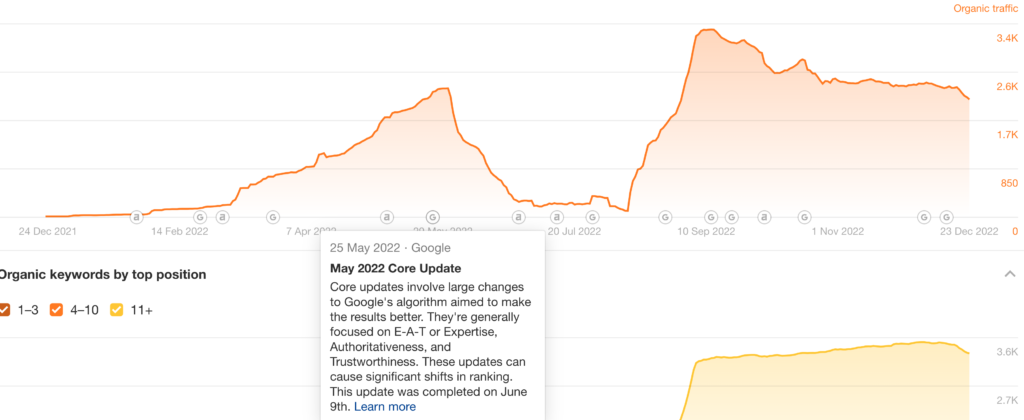

2. Check to see if there are any abnormal downfalls in traffic by comparing them to Google updates.

99% of the time if you’ve been hit with a penalty it’s likely an algorithm update. Google is constantly rolling out updates and unfortunately many websites get hit that shouldn’t have.

It’s unfair but that’s a part of the game.

Recommended tool

Ahrefs

How to

- Put your website into Ahrefs and you’ll see a graph which displays your estimated traffic. Under it there will be Google logos pointing out updates. Here, you can see which updates rolled out and what they were about. So if there’s any drop in traffic you can see the algorithm update which may have played a part in it.

- When you hover your cursor over the logo, click ‘learn more’ to see more about the update.

- Check Google’s official documentation on what a specific update means.

Schema Markup Checks

Having structured schema markup can lead to a 270%+ increase in traffic. SOURCE

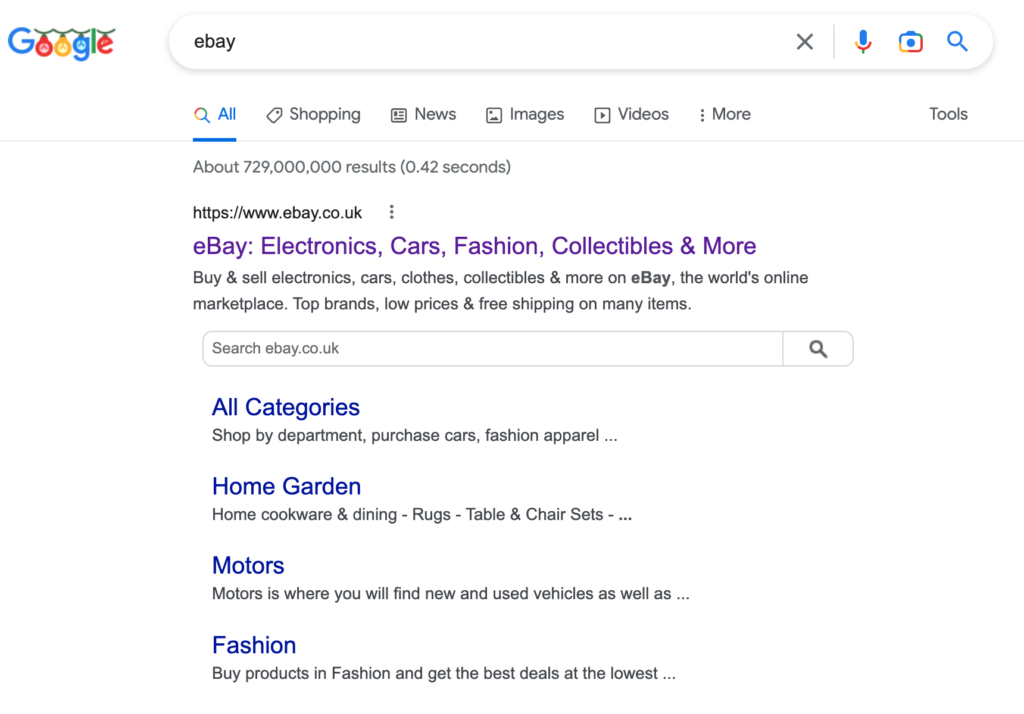

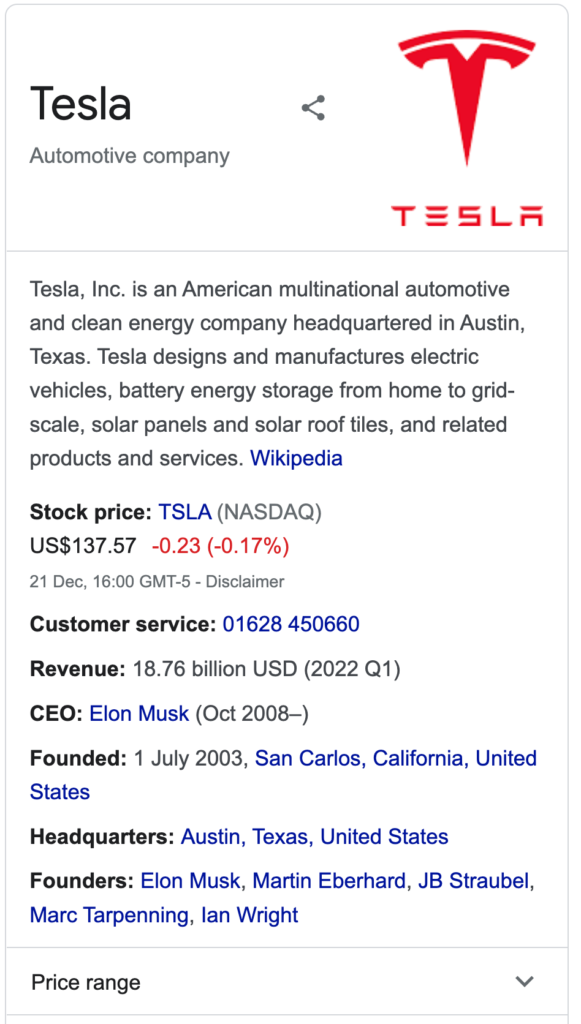

Schema markup, also referred to as structured data, is a standardised vocabulary you add to your page’s code to help the search engines understand your content better. Schema takes it a step further by creating visual items on the search results.

For example, if you’ve got a review schema there will be stars which appear in your search results. Taking more SERP space, catching the user’s eyes and leading to a higher click through rate.

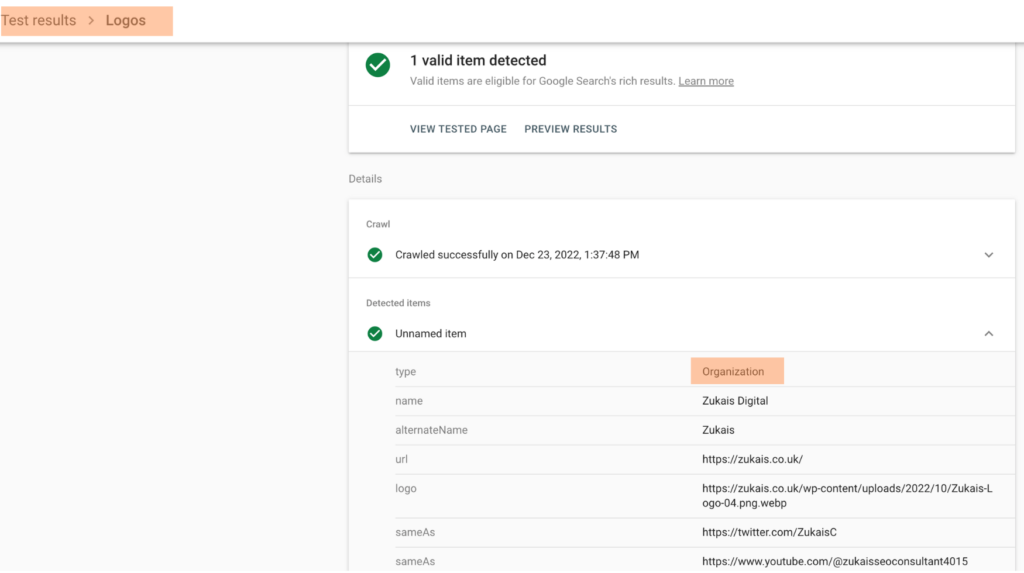

1. Does the site have organisation schema?

Organisation schema is an amazing way to help build trust with the search engine and build an entity.

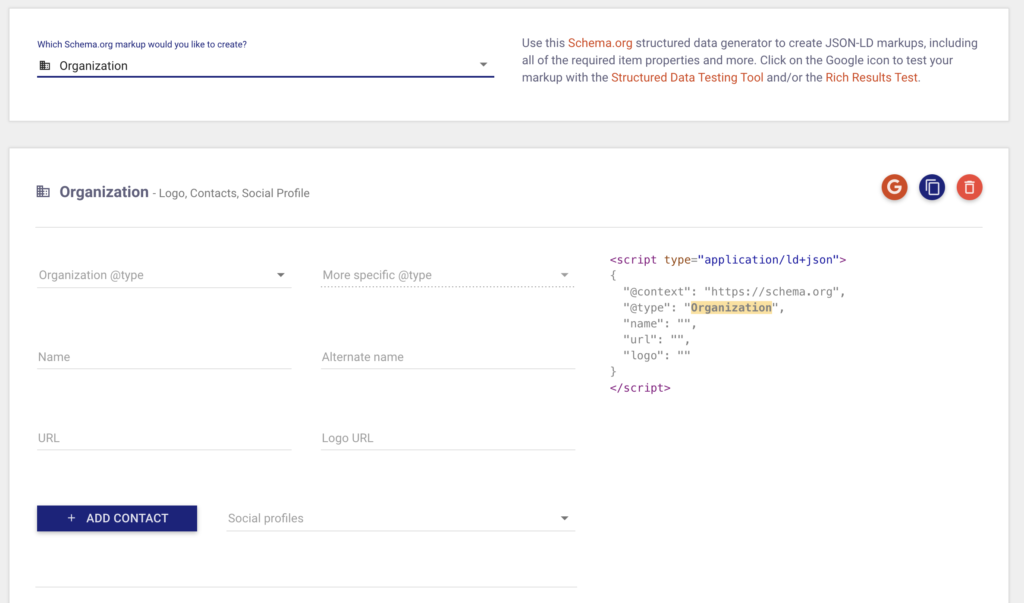

Recommended tool

Technical schema markup generator

How to

- Put your website into the Google rich result tester.

- Click on ‘Logos’ > ‘Unnamed item’, it should say ‘Organization’ next to ‘type’. If you can’t pull this up, it means you don’t have organisation schema. Follow the next step if you don’t have organisation schema.

- Click on ‘Organization’ in the technical schema markup generator and fill out the details. Copy the code and paste it into the home page only.
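If you'd like to see what the generated code roughly looks like, here's a minimal Python sketch that builds organisation schema as JSON-LD. The function name `organization_jsonld` and all the sample values are placeholders I've made up. The property names (`name`, `url`, `logo`, `sameAs`) come from the schema.org Organization type, and a generator will usually offer more fields than this.

```python
import json


def organization_jsonld(name, url, logo, same_as=()):
    """Build a schema.org Organization object wrapped in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
    }
    if same_as:
        data["sameAs"] = list(same_as)  # official profiles on other sites
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder details for illustration only.
print(organization_jsonld(
    name="Example Ltd",
    url="https://example.com/",
    logo="https://example.com/logo.png",
    same_as=["https://www.facebook.com/example"],
))
```

Whatever you generate, run the page through the rich result tester afterwards to confirm it's being picked up.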

2. If the site is eCommerce, is there product schema?

Product schema will reveal things on the search results such as price, stock, number of reviews etc. This can increase click through rates and improve the user experience, which in turn can lead to higher rankings.

Recommended tool

Technical schema markup generator

How to

- Put your product page into Google’s Rich Results Test. There should be a tick next to ‘Product Snippets’. If not, it’s a fail and you need to follow the next step.

- Using the technical schema markup generator, click on the ‘Product’ option and use that schema as a base for filling out information on your product pages. You’ll have to speak to your devs about implementing this.
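
To give your devs something concrete, here’s a rough Python sketch of product schema built from the kind of data a product page already has. The function name `product_schema`, its parameters and the sample trainer are all hypothetical. The property names (`offers`, `price`, `priceCurrency`, `availability`, `aggregateRating`) come from schema.org. Your platform may need more or fewer fields, so treat this as a base and nothing more.

```python
import json


def product_schema(name, description, image_urls, sku, price, currency,
                   in_stock, rating_value, review_count, page_url):
    """Return schema.org Product markup (with Offer and AggregateRating) as JSON-LD."""
    availability = "InStock" if in_stock else "OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "image": list(image_urls),
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": page_url,
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            # schema.org expresses stock status as a URL-style value
            "availability": f"https://schema.org/{availability}",
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product, for illustration only.
product_json = product_schema(
    name="White Leather Trainers",
    description="Lightweight everyday trainers with a cushioned sole.",
    image_urls=["https://www.example.com/images/white-leather-trainers.jpg"],
    sku="TRN-001",
    price=59.99,
    currency="GBP",
    in_stock=True,
    rating_value=4.6,
    review_count=128,
    page_url="https://www.example.com/products/white-leather-trainers",
)
print(product_json)
```

Only include the rating block when the page genuinely shows those reviews to users.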

3. Is there article schema?

Article schema is very straightforward. It helps the search engine understand what the article is about.

4. Is there review schema?

Review schema allows stars to appear within your search result. The extra visual appeal may help increase the click-through rate.

5. Is there job post schema?

Jobrapido received a 270% increase in new user registrations from organic traffic thanks to job post schema.

6. Is there FAQ schema?

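FAQ schema can make your listing take up more space on the first page by showing questions and answers under your result. Be aware that since August 2023 Google only shows FAQ rich results for well-known, authoritative government and health websites, so most sites will no longer see this benefit.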

7. Is there how to schema?

8. Is there course schema?

9. Is there recipe schema?

Using the recipe schema gave Rakuten Recipe Group a 270% increase in traffic.
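
If you’ve got lots of pages to go through, checking each schema type by hand gets old quickly. Here’s a small sketch, assuming the site adds its schema as JSON-LD script tags (schema added as microdata won’t be picked up), that lists every `@type` found in a page’s HTML. The names `JsonLdCollector`, `schema_types` and `sample_html` are hypothetical, and you’d feed in the HTML of your own pages.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            self.blocks.append("".join(self._buffer))


def schema_types(html):
    """Return the set of schema.org @type values found in the page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    found = set()
    for raw in collector.blocks:
        try:
            stack = [json.loads(raw)]
        except json.JSONDecodeError:
            continue  # broken markup: worth flagging separately in a real audit
        while stack:  # walk nested objects, lists and @graph arrays
            node = stack.pop()
            if isinstance(node, list):
                stack.extend(node)
            elif isinstance(node, dict):
                types = node.get("@type", [])
                found.update([types] if isinstance(types, str) else types)
                stack.extend(node.values())
    return found


sample_html = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Trainer",
 "offers": {"@type": "Offer", "price": "59.99", "priceCurrency": "GBP"}}
</script>
</head><body></body></html>
"""
print(sorted(schema_types(sample_html)))
```

Run it over one page of each type (home, product, article, job post and so on) and compare the result against the checklist above.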

Brand Checks

This section is about making sure your brand is on point when it’s being displayed on the search engines.

1. Does the homepage appear 1st when searched?

When your brand is searched, the home page should be the first organic result that shows up. If a different website ranks first, there’s something wrong.

If it’s your social media page which shows above your website, that’s OK. As time goes on your website will rank higher.

How to

- Type your brand name into Google and if your home page appears first in the search results, you’re all good. If not, keep reading.

- Make sure your brand name is in your home page meta title.

- Build links to your home page (see my guide to get some initial easy links)

- Add your brand name a couple of times throughout your home page

2. Does the site have a knowledge panel when searched?

When your website has a knowledge panel, that’s when you know for certain Google views you as an entity: a real brand, which separates you from the cesspool of websites on the web.

The knowledge panel or knowledge graph displays relevant information on the side regarding your website.

Celebrities, businesses, foods and definitions can all have a knowledge panel. Essentially, if Google is highly confident a piece of information is accurate, they will serve it in a knowledge panel.

How to

It’s not easy getting a knowledge panel. In fact, there’s no guaranteed way you’ll get one. There isn’t a Google property or piece of code you can use to get a knowledge panel.

BUT…

There are some things you can do which will help you increase your chances of getting the knowledge panel.

Organisation schema – As mentioned above, schema markup is extremely important. It’s going to help Google understand exactly what your website is about.

Social media links – You want to appear as an entity and one way to help with this is to simply create lots of social media accounts. This can be Facebook, Twitter, LinkedIn, Pinterest, Tumblr etc.

You want to create these accounts with a profile link back to your homepage.

A fact-filled about page – When you read a knowledge panel, it’s very fact based. It talks about the organisation’s or person’s date of birth, origin and a bit about them. So make sure you have some quickfire facts about you and your company.

Author bio – If you’re trying to get yourself a knowledge panel then you need to appear to be a real person. Have an author bio at the end of each blog post/article, along with links to your social media.

Google Business Profile – Google Business Profile (formerly Google My Business) is one of Google’s properties. It’s a way for businesses to get more reach to customers. So if you have a physical location, sign up and put a link to your website on there. It’s free!

Technical SEO fixes – If your website is difficult to navigate, good luck with getting a knowledge panel. Make sure you have fixed things like broken pages, improved page speed, pages aren’t too deep to reach and more. Take a look at the technical SEO section of this guide.

Contact page – Would Google be OK with directing their users to a business that has no way for people to get in touch? Of course not! So make sure you’ve got a dedicated contact page and you link to it in the menu.

Authority backlinks – Backlinks from websites related to yours will help massively if you’re trying to score a knowledge panel. And I’m not talking about low authority sites, but sites such as the BBC, or pets.com if you’re in the pets space. A Wikipedia page would be a massive win.

3. Are there any negative reviews?

You can have an unlimited marketing budget but negative reviews will kill conversions.

How to:

- Google your brand

- Are there any negative search results? These can be negative Google Business Profile, Facebook or Trustpilot reviews which may appear on the first page.

- Google [YOUR BRAND review] if that’s applicable. Are the people talking about you or your product doing so in a positive manner?

- Make a note of the first page results talking about your business negatively. Comment on them if you can and try to resolve the issue.

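- With negative Google Business Profile reviews, reply to each one and ask your happy customers to leave honest reviews. Don’t buy reviews: paid or fake reviews go against Google’s review policies and can get the reviews removed.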

4. Are there any negative Google Auto Suggests?

If people are seeing negative Google auto suggestions of your brand, they will automatically see it as a red flag.

As you’re Googling, there may be an autosuggest which hints at you being a bad brand. Someone may type [YOUR BRAND] and it might autosuggest [SCAM], for example ‘Revitol scar cream scam’.

How to

- Type your brand into Google and check whether there are any negative auto suggestions. If not, great. If there are, click on them. Get in touch with the webmaster of the pages that show up and ask if there’s any way you can rectify the issue.

- If they respond by saying no or you get no reply, there’s nothing you can do about it except improve your product and customer service.

5. Does the site have a Favicon?

It’s like the cherry on top.

A favicon is an icon which is displayed on the browser tab. It’s a simple way to get your logo on your website. If you don’t set the favicon yourself, a CMS such as WordPress will display its own logo.

That in turn will make your website appear unprofessional. Using a favicon is kinda like using your domain’s email address over gmail. It makes you appear more professional.
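
For a quick check without opening every page, here’s a minimal sketch that looks for a favicon declared in a page’s HTML. It assumes the favicon is set with a `<link rel="icon">` style tag. Browsers will also look for a /favicon.ico file at the root of the site, so an empty result here isn’t proof there’s no favicon. The class name `FaviconFinder` and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class FaviconFinder(HTMLParser):
    """Collect the href of every <link> tag whose rel includes 'icon'."""

    def __init__(self):
        super().__init__()
        self.icons = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        # rel can hold several tokens, e.g. "shortcut icon"
        rel_tokens = (attr.get("rel") or "").lower().split()
        if "icon" in rel_tokens and attr.get("href"):
            self.icons.append(attr["href"])


def find_favicons(html):
    finder = FaviconFinder()
    finder.feed(html)
    finder.close()
    return finder.icons


sample_head = """
<head>
  <link rel="stylesheet" href="/style.css">
  <link rel="shortcut icon" href="/favicon.ico">
</head>
"""
print(find_favicons(sample_head))
```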

Desktop VS Mobile Checks

1. Does the mobile navigation have the same links as the desktop navigation?

The mobile and desktop version of your website should be in sync with each other. There should only be a single version of your website and not multiple.

You don’t want different navigation links on the mobile version of your website compared to the desktop version. What’s the point in making the navigation different?

How to

- Use your phone and desktop to compare the navigation. If the links are the same on both mobile and desktop, you’re good. Compare across multiple types of pages such as home, category, blog post etc.
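
If eyeballing two menus side by side isn’t your thing, the comparison can be sketched in code. This assumes you’ve saved the HTML of the same page as it’s served to desktop and to mobile, and that the navigation links sit inside `<nav>` elements, which won’t be true for every theme. The names `NavLinkParser`, `nav_links` and `compare_navigation` are made up for this example.

```python
from html.parser import HTMLParser


class NavLinkParser(HTMLParser):
    """Collect the href of every <a> found inside a <nav> element."""

    def __init__(self):
        super().__init__()
        self._nav_depth = 0
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "nav":
            self._nav_depth += 1
        elif tag == "a" and self._nav_depth > 0:
            href = dict(attrs).get("href")
            if href:
                # treat /shop and /shop/ as the same link
                self.links.add(href.rstrip("/") or "/")

    def handle_endtag(self, tag):
        if tag == "nav" and self._nav_depth > 0:
            self._nav_depth -= 1


def nav_links(html):
    parser = NavLinkParser()
    parser.feed(html)
    parser.close()
    return parser.links


def compare_navigation(desktop_html, mobile_html):
    """Report navigation links that exist on one version but not the other."""
    desktop, mobile = nav_links(desktop_html), nav_links(mobile_html)
    return {
        "missing_on_mobile": sorted(desktop - mobile),
        "missing_on_desktop": sorted(mobile - desktop),
    }


# Hypothetical snapshots of the same page on desktop and mobile.
desktop_page = '<nav><a href="/">Home</a><a href="/shop/">Shop</a><a href="/blog/">Blog</a></nav>'
mobile_page = '<nav><a href="/">Home</a><a href="/shop">Shop</a></nav><a href="/blog/">Blog</a>'
print(compare_navigation(desktop_page, mobile_page))
```

Both lists should come back empty. Anything in ‘missing_on_mobile’ is a link your mobile visitors (and Google’s mobile crawler) can’t reach from the menu.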

2. Do the same content and visuals load on both desktop and mobile?

Sticking with making sure your website is consistent on both mobile and desktop, the visuals / content should be the same. Otherwise Google may recognise them as different websites.

How to

- View your website on your phone and compare it to the desktop version. Read some of the content and check out the visuals. Are they the same? Of course the placing of the visuals will be different because of the drastic change in screen size but the content should be the same.

- Check different page types such as categories, blogs, products etc.

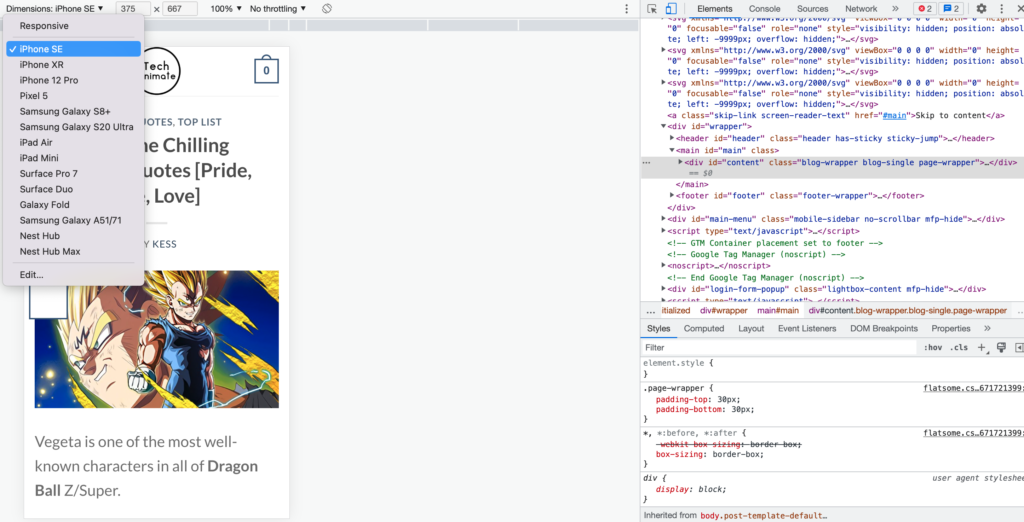

3. Does the site correctly load on devices such as iPad, iPhone, Pixel etc?

If your website does not adjust to different phone sizes, it will struggle to rank, because Google predominantly crawls and indexes the mobile version of your pages.

There are so many different types of phones out there and that’s the reason why your website must be able to adjust to any screen size.

How to

- On your website, right click and click on ‘Inspect’

- There will be an icon which looks like a phone and tablet. Click on that.

- Use the drop down menu to view your website from different phones. This method isn’t always an accurate representation of what your website looks like on a particular device, which is why it’s best to also check on a physical phone.

4. Does the site have separate mobile versions or does it work responsively?

As mentioned, there should only be a single version of your website. We’re not in the 90s where there’s a separate mobile version and desktop version.

If you have a separate mobile version, get back into the current year and move to a single responsive website.

Page Type Checks

Page type checks make sure each type of page includes all the necessary things it should for both SEO and conversions.

1. Homepage check

- Is the home page targeting a keyword and is the target keyword in the meta title and H1 tag?

- Are there links to important pages you want to rank?

- If a user were to look away from the screen and back at it, would they know what the website is about?
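
The keyword part of this check (which comes up again for category and service pages) is easy to script. Here’s a rough sketch, assuming you already have the page’s HTML and know the target keyword. `TitleH1Parser`, `keyword_check` and the sample page are hypothetical names, and the match is a plain case-insensitive ‘contains’ check, so close variations of the keyword won’t count.

```python
from html.parser import HTMLParser


class TitleH1Parser(HTMLParser):
    """Extract the text of the <title> tag and of every <h1> on the page."""

    def __init__(self):
        super().__init__()
        self._current = None
        self.title = ""
        self.h1s = []

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._current = "title"
        elif tag == "h1":
            self._current = "h1"
            self.h1s.append("")

    def handle_endtag(self, tag):
        if tag in ("title", "h1"):
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1s[-1] += data  # also catches text inside nested tags


def keyword_check(html, keyword):
    """Check whether the keyword appears in the meta title and in an H1."""
    parser = TitleH1Parser()
    parser.feed(html)
    parser.close()
    wanted = keyword.lower()
    return {
        "in_title": wanted in parser.title.lower(),
        "in_h1": any(wanted in h1.lower() for h1 in parser.h1s),
        "h1_count": len(parser.h1s),
    }


sample_page = (
    "<html><head><title>Adidas Trainers | Example Shop</title></head>"
    "<body><h1>Shop <span>Adidas trainers</span></h1></body></html>"
)
print(keyword_check(sample_page, "adidas trainers"))
```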

2. eCommerce page check

- Category pages should be indexable except for empty categories.

- Choose a target keyword and make sure it’s in the meta title and H1 tag.

- Make sure the child categories link to the parent category.

3. Service page check

- Include the target keyword in the meta title and H1 tag.

- Make sure the copy has been formatted (Break up the text, include the benefits of your product/service, multimedia etc.)

4. Product page check

- Unique copy (include the benefits of the products and not just features)

- Multiple high quality images.

- Include a video tutorial if applicable.

- Reviews

- Shipping information

5. Blog post check

- Exclude the date (unless the page is targeting a time-sensitive query such as ‘what time is Tyson VS Joshua on?’)

- Include an author bio

- A featured image which conveys at first glance what the page is about.

- Format the post (break up the text, lots of multimedia, suitable text size etc.)

- Link to relevant blog posts

6. 404 page check

If there’s a broken page on your website, you want to make sure it actually returns a 404 status code.

How to

- Type something random in the address bar e.g. yourwebsite.com/wbfiu

- You’ll land on a page which doesn’t exist and it should return a 404. A status code browser extension, or the ‘Network’ tab in your browser’s developer tools, should say 404.
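
The same test can be scripted. Below is a minimal sketch using only Python’s standard library: it builds a random URL on your site, fetches it and classifies the status code. The function names and the verdict wording are my own, and the network call is left commented out so you can point it at your own domain. A 200 on a page that doesn’t exist is what Google calls a ‘soft 404’.

```python
import urllib.error
import urllib.request
import uuid


def random_test_url(site):
    """Build a URL on the site that almost certainly doesn't exist."""
    return site.rstrip("/") + "/" + uuid.uuid4().hex


def fetch_status(url, timeout=10):
    """Return the final HTTP status code for the URL (redirects are followed)."""
    request = urllib.request.Request(url, headers={"User-Agent": "seo-audit-sketch"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions


def classify_missing_page(status):
    """Turn the status code of a non-existent page into an audit verdict."""
    if status == 404:
        return "pass"
    if 200 <= status < 300:
        return "soft 404: a missing page is returning a success code"
    return "check: unexpected status " + str(status)


# Example usage (needs internet access, so it is commented out):
# url = random_test_url("https://www.yourwebsite.com")
# print(url, classify_missing_page(fetch_status(url)))
print(classify_missing_page(404))
```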

Here’s what to include in your 404 page:

- Search box

- Links back to helpful resources such as contact, home or about page.

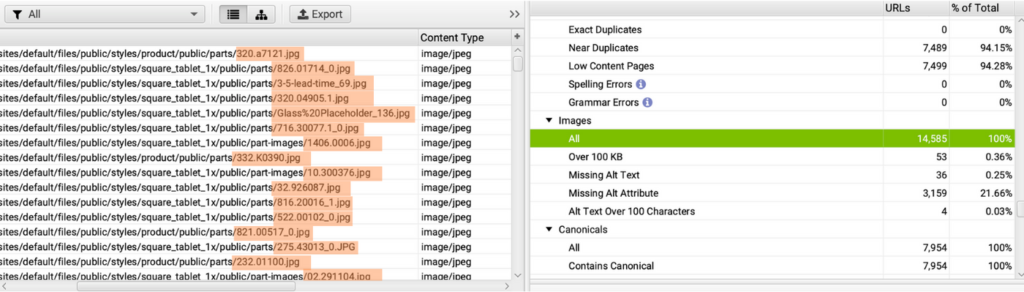

Image SEO Checks

If you own a retail business, image SEO is CRITICAL to your success.

Let’s say you sell Adidas trainers. If you were to Google that term, images would appear. People interested in Adidas trainers will click an image and consider making a purchase.

So you can’t afford to miss out on image SEO.

1. Are image files named?

If you want to rank your images, it’s critical your head keyword is in the file name of the image you want to rank.

Recommended tool

Screaming Frog

How to

- Run the Screaming Frog crawl, click on ‘All’ under ‘Images’.

- In the ‘Address’ column you will see the URLs of the images. The image file name is whatever comes after the last ‘/’.

If you see URLs ending in something like /78345dvr, your images aren’t being given descriptive file names.

From now on, name your images before uploading.

When it comes to images you’ve already uploaded, prioritise which ones to re-upload with a descriptive file name. Start with the pages that have the most potential, impressions, traffic and conversions.
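
Once you’ve exported the image URLs, a rough filter can flag the worst offenders for you. The sketch below is a heuristic of my own, not something Screaming Frog does: it treats a file name as undescriptive when it’s a camera-style default such as IMG_1234, or when it contains no word made up of three or more letters. The function names and example URLs are hypothetical, and you’ll want to sanity-check what it flags.

```python
import re
from pathlib import PurePosixPath
from urllib.parse import unquote, urlparse


def image_file_stem(url):
    """Return the file name after the last '/', lower-cased, without extension."""
    file_name = PurePosixPath(unquote(urlparse(url).path)).name
    return PurePosixPath(file_name).stem.lower()


def looks_undescriptive(url):
    """Heuristic: flag hashed, numeric or camera-default image file names."""
    stem = image_file_stem(url)
    # Camera and screenshot defaults such as img_1234, dsc0042, image, photo-3
    if re.fullmatch(r"(img|dsc|image|photo|screenshot)?[-_ ]?\d*", stem):
        return True
    words = [word for word in re.split(r"[-_\s]+", stem) if word]
    # Descriptive names contain at least one purely alphabetic word (3+ letters)
    return not any(re.fullmatch(r"[a-z]{3,}", word) for word in words)


example_urls = [
    "https://www.example.com/uploads/78345dvr",
    "https://www.example.com/uploads/IMG_1234.jpg",
    "https://www.example.com/uploads/adidas-samba-trainers-white.jpg",
]
for url in example_urls:
    print(url, looks_undescriptive(url))
```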

2. Do images have alt tags?

What is an alt tag?

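An alt tag (strictly speaking, the alt attribute of the HTML image tag) is a short text description of an image. If the image fails to load, the alt text will appear to help the user understand what the image is. It’s also there to help visually impaired people, whose screen readers read it aloud, understand what the image is.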

Google uses it to better understand the image and that’s why you shouldn’t skip it.

How to

- After the Screaming Frog crawl, click on ‘All’ under ‘Images’.

- Click on ‘Missing Alt Text’ under the tab.

- Click on ‘Image Details’ at the bottom. Scroll over to ‘Alt Text’ and you’ll be able to view the alt text.

- Export the image alt text by clicking on ‘Bulk Export’, ‘Images’, ‘Images Missing Alt Attributes & text links’.

Now you can see the images without alt text and adjust the alt text accordingly.
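
If you want to spot-check a single page without running a full crawl, here’s a small sketch that does a similar job on one page’s HTML. It separates images with no alt attribute at all from images with an empty one, because an empty alt is acceptable for purely decorative images. `AltAudit`, `audit_alt_text` and the sample markup are hypothetical names for this example.

```python
from html.parser import HTMLParser


class AltAudit(HTMLParser):
    """Record images with a missing alt attribute and images with an empty one."""

    def __init__(self):
        super().__init__()
        self.missing = []  # <img> with no alt attribute at all
        self.empty = []    # <img alt=""> (fine for decorative images only)

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src") or "(no src)"
        if "alt" not in attr:
            self.missing.append(src)
        elif not (attr["alt"] or "").strip():
            self.empty.append(src)


def audit_alt_text(html):
    audit = AltAudit()
    audit.feed(html)
    audit.close()
    return {"missing_alt": audit.missing, "empty_alt": audit.empty}


sample_markup = (
    '<img src="/a.jpg" alt="White Adidas trainers on a wooden floor">'
    '<img src="/b.jpg">'
    '<img src="/c.jpg" alt="">'
)
print(audit_alt_text(sample_markup))
```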

EEAT Checks

EEAT stands for Experience, Expertise, Authoritativeness and Trustworthiness. It comes from Google’s Search Quality Rater Guidelines and describes how Google assesses the quality of a page. Google created EEAT to try and separate low quality websites from actual resourceful websites.

EEAT has become one of the most critical things in search engine optimisation today.

1. About Page